Research Institute of Electrical Communication, Tohoku University

KURIKI, Ichiro


Properties of the visual system and material perception under a wide dynamic range of luminance

Principal Investigator: KURIKI, Ichiro (Research Institute of Electrical Communication, Tohoku University)

Research Institute of Electrical Communication, Tohoku University

KURIKI, Ichiro

Takehiro Nagai (Tokyo Institute of Technology)

Tomoharu Sato (National Institute of Technology Ichinoseki College)

Satoshi Shioiri, Chia-huei Tseng, Sae Kaneko, Kentaro Takakura, Gaku Watanabe (Tohoku University)

Yuta Hosaka, Misaki Hayasaka (Yamagata University)

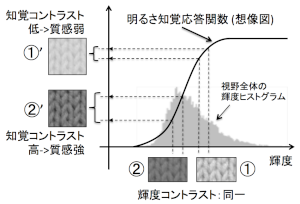

Although glossy highlights on objects have a large impact on the perception of surface finish, and many researchers study this feature, the material properties of the object underneath the glossy finish can be obtained from a considerably lower luminance range than the highlights. Luminance can span a dynamic range of more than 1:1,000,000. We found that object images with lower mean luminance yield a stronger impression of material. Does the luminance contrast profile define material perception (Shitsukan)? Using psychophysical methods, we investigate the role of the higher and lower luminance ranges in material perception in relation to visual performance (contrast sensitivity). We use a wide-dynamic-range luminance display that can properly reproduce the darker parts of an image.
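The quantities named above can be made concrete with a little arithmetic. The sketch below is illustrative only and is not the project's analysis code: the luminance values are assumptions chosen to show that a 1:1,000,000 range corresponds to 6 log units (about 20 photographic stops), and it uses the standard Michelson definition of contrast and the usual convention that contrast sensitivity is the reciprocal of threshold contrast.

```python
import math


def dynamic_range(l_min: float, l_max: float) -> dict:
    """Express the luminance ratio l_max:l_min in common units."""
    if not 0 < l_min < l_max:
        raise ValueError("require 0 < l_min < l_max")
    ratio = l_max / l_min
    return {
        "ratio": ratio,
        "log10_units": math.log10(ratio),  # decades of luminance
        "stops": math.log2(ratio),  # doublings of luminance
    }


def michelson_contrast(l_max: float, l_min: float) -> float:
    """Michelson contrast (Lmax - Lmin) / (Lmax + Lmin), between 0 and 1."""
    return (l_max - l_min) / (l_max + l_min)


def contrast_sensitivity(threshold_contrast: float) -> float:
    """Contrast sensitivity, conventionally the reciprocal of threshold contrast."""
    return 1.0 / threshold_contrast


# Illustrative values (assumptions): a 0.001 cd/m^2 shadow and a 1000 cd/m^2 highlight.
print(dynamic_range(0.001, 1000.0))
```

Because Michelson contrast is unchanged when luminance is scaled (an 11/9 pattern and a 1.1/0.9 pattern both give 0.1), the same contrast profile can be presented at mean luminances that differ by orders of magnitude; this is the kind of manipulation a wide-dynamic-range display makes possible.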

Material perception strategy unique to the brain

Principal Investigator: Takehiro Nagai (Department of Information and Communications Engineering, School of Engineering, Tokyo Institute of Technology)

Department of Information and Communications Engineering, School of Engineering, Tokyo Institute of Technology

Takehiro Nagai

Some previous studies suggest that our visual system may perceive the surface qualities of objects on the basis of relatively simple image features. This may reflect ingenious information processing that allows the human brain to capture surface qualities even from retinal images created by complex optical processes. How did the brain acquire such simple strategies? One idea is that the brain learned image features useful for discriminating surface qualities from a vast amount of visual experience, similarly to machine learning techniques. Alternatively, the brain may have acquired simple processing for surface qualities that bear directly on survival, so as to capture them as quickly as possible. This latter strategy may be unique to the brain. To answer this question, we address two sub-questions: 1. What surface qualities does the brain perceive on the basis of simple image features? 2. How do the image features employed by the brain differ from those employed by machine learning?

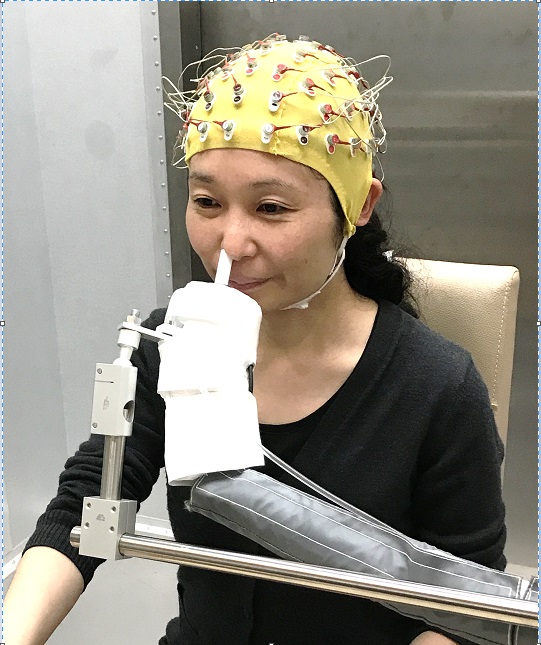

Neural basis of odor quality: The perspective of a human EEG decoding study

Principal Investigator: Masako OKAMOTO (The University of Tokyo, Department of Applied Biological Chemistry, Graduate School of Agricultural and Life Sciences)

The University of Tokyo, Department of Applied Biological Chemistry, Graduate School of Agricultural and Life Sciences

Masako OKAMOTO

Kazushige Touhara, Yukei Hirasawa, Toshiki Okumura, Naoki Fukuda, Mugihiko Kato (The University of Tokyo)

We can perceive a wealth of qualities, or "shitsukan", from odors, such as sweet, apple-like, or fresh. In humans, the neural basis of odor quality perception has been studied mainly using functional magnetic resonance imaging (fMRI). However, because fMRI has relatively low temporal resolution, the temporal aspects of the cortical processing of odor quality have not been well examined. In this project, we use electroencephalography (EEG), which can non-invasively capture human brain activity on the order of milliseconds. By decoding the dynamic patterns of evoked EEG signals, we will examine at what point in the time course of cortical processing odor quality is coded. We will also explore how odor quality is coded by testing several candidate features, such as the amplitude of brain potentials and the power and phase of brain oscillations. In this way, we aim to elucidate when and how odor quality is coded in the human brain.

Neural correlates and perceptual control of tinnitus

Principal Investigator: Hirokazu Takahashi (Graduate School of Information Science and Technology, The University of Tokyo)

Graduate School of Information Science and Technology, The University of Tokyo

Hirokazu Takahashi

Tomoyo Shiramatsu, Naoki Wake, Koutaro Ishizu (Research Center for Advanced Science and Technology, the University of Tokyo)

Sho Kanzaki (School of Medicine, Keio University)

Tinnitus is a subjective, phantom perception generated by the brain. Tinnitus often triggers unpleasant emotions and can severely deteriorate quality of life. The objective of this study is to elucidate the neural mechanisms of tinnitus development in a rodent model and to explore efficacious treatments, e.g., sound therapy, cognitive behavioral therapy, and medication, which may change the quality of tinnitus and improve its symptoms. We attempt to behaviorally quantify the symptoms of tinnitus in rats with acoustic trauma and to investigate the neural correlates of tinnitus in the auditory cortex. We then attempt to modify tinnitus-related cortical activity and tinnitus perception through sound presentation, auditory learning, and medication. We hypothesize that tinnitus is a malfunction of sound amplification, attention, and emotion. We quantify these functions in behavioral experiments, identify their neural correlates in physiological experiments, and attempt possible interventions.

Mechanism and presentation of unique auditory and tactile texture perceptions by visually impaired and deaf-blind people

Principal Investigator: Miura, Takahiro (National Institute of Advanced Industrial Science and Technology (AIST))

National Institute of Advanced Industrial Science and Technology (AIST)

Miura, Takahiro

Ken-ichiro Yabu (Institute of Gerontology (IOG), The University of Tokyo)

Masatsugu Sakajiri (Tsukuba University of Technology)

Naoyuki Okochi (Research Center for Advanced Science and Technology (RCAST), The University of Tokyo)

Tohru Ifukube (Institute of Gerontology (IOG), The University of Tokyo)

Junji Onishi (Tsukuba University of Technology)

Yoshikazu Seki (National Institute of Advanced Industrial Science and Technology (AIST))

Teruo Muraoka (Japan Women's University)

In accordance with the Disability Discrimination Legislation enacted in 2016, the Japanese government has made it obligatory to provide reasonable accommodation to people with disabilities. As a result, there is an increased need for assistive technology (AT) and augmentative and alternative communication (AAC) for visually impaired and deaf-blind individuals. Although many companies and researchers have developed various systems for them, efforts to improve the quality of the presented information remain at an early stage. Moreover, because blind people have unique perceptual characteristics, presentation methods designed for sighted people may not be useful to them. In this study, our purpose is to demonstrate the unique mechanisms of auditory and tactile texture perception among the blind and then to derive implications for display methods. Our subgoals are as follows:

1. Developing an auditory presentation method of spatial and phonetic impressions for the blind

2. Developing a tactile presentation method of textures for the blind

3. Investigating multimodal perception across the auditory and tactile senses among the blind

4. Developing a tactile presentation method of textures for the deaf-blind

Understanding SHITSUKAN recognition mechanisms in speech perception based on the concept of amplitude modulation

Principal Investigator: Masashi Unoki (Japan Advanced Institute of Science and Technology)

Japan Advanced Institute of Science and Technology

Masashi Unoki

Shunsuke Kidani, Maori Kobayashi (Japan Advanced Institute of Science and Technology)

In daily environments, humans are thought to perceive the auditory SHITSUKAN of an object from its corresponding acoustic features without estimating the room acoustics in which the target sound is heard. In room acoustics, the speech transmission index (STI) is used to assess the quality and intelligibility of a target sound in terms of its modulation characteristics. The physical information carried by amplitude modulation can therefore be regarded as playing an important role in accounting for the perceptual aspects of SHITSUKAN, once the concept of modulation perception is combined with room acoustics. This study aims to understand the SHITSUKAN recognition mechanisms in the speech perception of target sounds in room acoustics, building on our previous project D01-7. In particular, we will (1) construct temporal-frequency and temporal-modulation-frequency analysis methods that track instantaneous fluctuations of the modulation spectrum, in order to capture the temporal fluctuation of amplitude modulation related to SHITSUKAN in speech perception; (2) investigate which physical features are related to auditory SHITSUKAN recognition in speech perception, such as tension; (3) investigate whether perceptual aspects related to room acoustics affect speech SHITSUKAN recognition of the target sound; and (4) investigate whether humans recognize SHITSUKAN in the speech perception of the target sound by separating out the room acoustic information.
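To illustrate how room acoustics reduce amplitude modulation, the sketch below uses the textbook modulation transfer function for an ideal, noise-free, exponentially decaying reverberant field, m(F) = [1 + (2πFT/13.8)²]^(-1/2), where F is the modulation frequency in Hz and T the reverberation time in seconds, together with the usual conversion of m into an apparent SNR and a transmission index. It is a simplified, single-band sketch: the full STI additionally weights octave bands and accounts for noise and masking, none of which is modeled here, and the function names are hypothetical.

```python
import math

# The 14 modulation frequencies (Hz) conventionally used for the STI: 0.63-12.5 Hz in 1/3-octave steps.
MOD_FREQS = [0.63, 0.8, 1.0, 1.25, 1.6, 2.0, 2.5, 3.15, 4.0, 5.0, 6.3, 8.0, 10.0, 12.5]


def mtf_reverb(f_mod: float, t60: float) -> float:
    """Modulation transfer of an ideal exponentially decaying (diffuse) reverberant field."""
    return 1.0 / math.sqrt(1.0 + (2.0 * math.pi * f_mod * t60 / 13.8) ** 2)


def transmission_index(m: float) -> float:
    """Map a modulation transfer value m to a transmission index in [0, 1]."""
    if m >= 1.0:
        snr = 15.0  # no modulation loss: treat as the upper clip value
    elif m <= 0.0:
        snr = -15.0  # modulation fully lost: lower clip value
    else:
        snr = 10.0 * math.log10(m / (1.0 - m))  # apparent SNR in dB
    snr = max(-15.0, min(15.0, snr))  # clip to +/-15 dB
    return (snr + 15.0) / 30.0


def simplified_sti(t60: float) -> float:
    """Unweighted mean transmission index over the 14 modulation frequencies (one band, no noise)."""
    tis = [transmission_index(mtf_reverb(f, t60)) for f in MOD_FREQS]
    return sum(tis) / len(tis)


for t in (0.5, 1.0, 2.0):
    print(t, round(simplified_sti(t), 3))
```

Longer reverberation times smear the temporal envelope and attenuate the faster modulations most strongly, which is why the index falls as T grows.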

Neural mechanisms of palatability: How does the brain recognize the smell and flavor of food?

Principal Investigator: Koshi Murata (Division of Brain Structure and Function, Faculty of Medical Sciences, University of Fukui)

Division of Brain Structure and Function, Faculty of Medical Sciences, University of Fukui

Koshi Murata

Tomoki Kinoshita (University of Fukui)

Palatable food is essential for enriching our lives. However, it is still unclear whether and how the brain creates the subjective feeling of hedonic eating. In this study, we define palatability as the hedonic quality of food that reinforces eating that food. We aim to reveal the neural mechanisms of palatability using neural manipulation experiments in mice.

Transformation of shitsukan memory: Psychological and neural mechanisms

Principal Investigator: Jun Saiki (Graduate School of Human and Environmental Studies, Kyoto University)

Graduate School of Human and Environmental Studies, Kyoto University

Jun Saiki

Hiroki Yamamoto, Hiroyuki Tsuda, Munendo Fujimichi (Kyoto University)

We aim to create a theoretical framework of "Shitsukan cognition" by integrating perception and memory research. Also, by collaborating with Shitsukan technologies, we try to build a basis for visualizing our past subjective experiences held in memory. Our previous work revealed that (1) the precision of glossiness memory is almost equal to that of perception, (2) glossiness memory has a bias, and (3) ventral visual areas and the intraparietal sulcus are involved in visual short-term memory for glossiness. The current project focuses on the transformation of Shitsukan memory and evaluates various hypotheses through psychophysical and cognitive neuroscience experiments. The transformation in glossiness memory can be described as a selective decay of the high spatial frequency components of the image, and we will examine three hypotheses regarding its underlying mechanism: information compression, statistical optimization, and maximization of stimulus value. Moreover, we will extend the findings to other visual images, such as scenes and artworks, by using style transfer techniques.
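The description of memory as a selective loss of high spatial frequencies can be sketched as a low-pass filter whose width grows with retention time. In the toy model below, which is a hypothetical illustration and not one of the three hypotheses named above, a 1-D luminance profile containing a specular highlight is blurred with a normalized Gaussian. A Gaussian of width σ attenuates spatial frequency f by exp(-2π²σ²f²), so fine structure such as the highlight fades first while the overall luminance level is kept. The linear growth rate `decay_rate` is an assumed parameter.

```python
import math


def gaussian_kernel(sigma: float) -> list[float]:
    """Normalized 1-D Gaussian kernel; sigma <= 0 gives the identity kernel."""
    if sigma <= 0:
        return [1.0]
    radius = max(1, int(math.ceil(3 * sigma)))
    weights = [math.exp(-(x * x) / (2 * sigma * sigma)) for x in range(-radius, radius + 1)]
    total = sum(weights)
    return [w / total for w in weights]


def blur(profile: list[float], sigma: float) -> list[float]:
    """Convolve with the kernel, replicating edge values so the output length is unchanged."""
    kernel = gaussian_kernel(sigma)
    radius = len(kernel) // 2
    n = len(profile)
    out = []
    for i in range(n):
        acc = 0.0
        for k, w in enumerate(kernel):
            j = min(n - 1, max(0, i + k - radius))  # clamp index at the borders
            acc += w * profile[j]
        out.append(acc)
    return out


def remembered_profile(profile: list[float], retention_s: float, decay_rate: float = 0.5) -> list[float]:
    """Hypothetical memory trace: blur width grows linearly with retention time."""
    return blur(profile, decay_rate * retention_s)


# Illustrative stimulus: a uniform surface (0.2) with a one-sample highlight (1.0).
surface = [0.2] * 20
surface[10] = 1.0
print([round(v, 3) for v in remembered_profile(surface, 2.0)])
```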

Functional domain organization for processing texture information in the primate ventral visual pathway

Principal Investigator: Ichiro Fujita (Osaka University Graduate School of Frontier Biosciences and Center for Information and Neural Networks)

Osaka University Graduate School of Frontier Biosciences and Center for Information and Neural Networks

Ichiro Fujita

Mikio Inagaki, Gaku Hatanaka, Shintaro Miike, Yuto Yasui (Osaka University)

Koji Ikezoe (University of Yamanashi)

Shinji Nishimoto (National Institute of Information and Communications Technology)

Visual texture information is processed along the ventral visual pathway. It proceeds gradually from the primary visual cortex (V1) through mid-tier areas (V2, V4) to the inferior temporal cortex. These areas consist of subareas or domains such as blobs and inter-blobs in V1 and three types of stripes in V2. It is unknown which of these domains process texture information. Answers to this question will advance our understanding of how texture information is combined with or segregated from other visual cues such as shape, color, motion, and depth. We address this question by applying intrinsic signal optical imaging combined with 2-photon calcium imaging techniques to the visual cortex in non-human primates.

Elucidation of the mechanism underlying haptic texture perception and development of a novel approach for Alzheimer’s disease early detection

Principal Investigator: Jiajia YANG (Graduate School of Interdisciplinary Science and Engineering in Health Systems, Okayama University)

Graduate School of Interdisciplinary Science and Engineering in Health Systems, Okayama University

Jiajia YANG

Jinglong Wu, Yoshimichi Ejima (Okayama University)

Koji Abe (Okayama University Medical School)

Yinghua Yu (Japan Society for the Promotion of Science, Okayama University)

Astereognosis is the inability to identify an object by touch; it is also called tactile agnosia. Previous studies indicated that lesions of the parietal lobe or the posterior association areas contribute to astereognosis. However, our recent findings revealed that patients with Alzheimer's disease (AD) without any parietal lobe damage also showed symptoms very similar to those of astereognosis patients. This suggests that astereognosis may be an associative disorder, like AD, involving disconnection between tactile information and memory in the brain. Therefore, we focus on the mechanism by which astereognosis develops in order to elucidate the neural substrates of haptic texture processing. We will then investigate how the progression of AD correlates with the impairment of haptic function and develop a novel approach for the early detection of AD.

The acquisition process of SHITSUKAN recognition: perceptual environment and affective information

Principal Investigator: So Kanazawa (Department of Psychology, Japan Women's University)

Department of Psychology, Japan Women's University

So Kanazawa

Jiale Yang (The University of Tokyo; The Japan Society for the Promotion of Science)

Wakayo Yamashita (Kagoshima University)

Yusuke Nakashima, Yuta Ujiie (Research and Development Initiative, Chuo University)

Kazuki Sato (Japan Women's University)

Shuma Tsurumi (Chuo University)

The focus of our project is to comprehensively trace the development of SHITSUKAN recognition in human infancy. Specifically, the project sets out several interrelated objectives: 1) to investigate how the perceptual environment affects individual differences in SHITSUKAN recognition; 2) to determine when and how infants develop emotional responses related to SHITSUKAN recognition; 3) to explore the development of multisensory integration in SHITSUKAN recognition; 4) to examine the development of the visual receptive field structure that supports SHITSUKAN recognition. To achieve these objectives, we will utilise both behavioural methods (e.g. the preferential looking paradigm and the habituation paradigm) and psychophysiological methods (e.g. NIRS and EEG). Furthermore, we will use head-mounted cameras to record differences in infants' environments and analyse the statistical properties of these differences.

Comparative and developmental approach to Shitsukan recognition in chimpanzees and human children

Principal Investigator: Tomoko Imura (Department of Psychology, Japan Women's University)

Department of Psychology, Japan Women's University

Tomoko Imura

Masaki Tomonaga (Kyoto University)

Nobu Shirai (Niigata University)

We aim to explore the universality and uniqueness of Shitsukan perception across species, and the influence of cultural and social experience on it, by comparing chimpanzees with human children and adults. We will examine Shitsukan discrimination ability using a wide range of materials, such as differences in food quality and skin texture that are related to the survival of living organisms, and differences between metal and glass rendered by computer graphics. We will not only clarify Shitsukan with regard to the texture of materials but will also examine the development and evolutionary foundations of Shitsukan recognition, including aesthetic evaluation such as preferences for Shitsukan and chromatic composition.

Neural mechanism of liquid motion perception

Principal Investigator: Takahisa Sanada (Faculty of Software and Information Science, Iwate Prefectural University)

Faculty of Software and Information Science, Iwate Prefectural University

Takahisa Sanada

The goal of this study is to reveal the neural representation of liquid motion. It has been reported that local motion speed is the critical feature for apparent liquid viscosity and that image statistics related to spatial smoothness are important for the impression of liquidness (Kawabe et al., 2015).

We have been studying the neural representation of liquidness in higher visual areas. In this study, we explore how viscosity is encoded in the cortical visual areas.

Electrophysiological recordings will be conducted in higher visual areas, such as MT/FST, that receive local motion signals. By comparing neural properties for liquidness and viscosity across areas, we will study the functional roles of these areas in liquid motion perception.

Individual differences in preferences for auditory stimuli: A combined study of deep learning and functional MRI

Principal Investigator: Junichi Chikazoe (National Institute for Physiological Sciences, Supportive Center for Brain Research)

National Institute for Physiological Sciences, Supportive Center for Brain Research

Junichi Chikazoe

Information about the external world is gathered through the sensory organs and transformed into abstract value information in our brain. A stimulus that is extremely attractive to one individual can be disgusting to another, suggesting individual differences in preferences for sensory stimuli. Considering that value estimation transforms qualitative information into quantitative information, individual differences in preferences should stem from the neural underpinnings of sense-to-value transformation as well as of Shitsukan recognition. Previous functional MRI studies demonstrate that multiple brain regions, including the orbitofrontal cortex, nucleus accumbens, and striatum, are associated with value processing; however, it remains unclear how sensory information is transformed into value. Based on the fact that artificial neural networks for object recognition fairly approximate hierarchical information processing in the ventral visual pathway of the primate brain (Güçlü et al., 2015), we hypothesize, and test, that the transformation from auditory input to abstract value can be approximated by similar hierarchical information processing implemented in an artificial neural network. First, we will build a prototype audio-to-value converter by transfer learning of a neural network for auditory processing, using the play counts of music clips uploaded to the Free Music Archive. Next, we will build individually optimized audio-to-value converters based on behavioral and neuroimaging data acquired during value estimation for music. With this procedure, we will specify the correspondence of representations between each network layer and each brain region at the individual level. Furthermore, we will integrate the audio-to-value converters and the information maps of neural representations across individuals.
We will specify brain regions that could underlie individual differences, while also specifying the common neural correlates of audio-to-value transformation across individuals.
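One common way to relate network layers to brain regions is representational similarity analysis (RSA), which compares the pattern of pairwise stimulus dissimilarities in each layer with that in each region. The text does not say which method the project uses, so RSA here is an assumed, swapped-in technique, and the layer and region names in the example are hypothetical. The sketch computes a representational dissimilarity matrix (1 - Pearson r between stimulus patterns) for each set of activations and assigns each region to the layer whose matrix correlates best with it.

```python
import itertools
import math


def pearson(x, y):
    """Pearson correlation of two equal-length sequences (assumes non-zero variance)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def rdm(patterns):
    """Upper triangle of a representational dissimilarity matrix over stimuli."""
    pairs = itertools.combinations(range(len(patterns)), 2)
    return [1.0 - pearson(patterns[i], patterns[j]) for i, j in pairs]


def match_layers_to_regions(layer_acts, region_acts):
    """For each region, return the layer whose RDM correlates best with the region's RDM.

    layer_acts / region_acts: dict name -> list of per-stimulus activation vectors,
    with stimuli in the same order everywhere.
    """
    layer_rdms = {name: rdm(p) for name, p in layer_acts.items()}
    best = {}
    for region, patterns in region_acts.items():
        target = rdm(patterns)
        scores = {name: pearson(r, target) for name, r in layer_rdms.items()}
        best[region] = max(scores, key=scores.get)
    return best
```

Running the matching separately on each participant's fMRI patterns would give an individual-level layer-to-region mapping of the kind described above.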

Effect of amygdala feedback on neural representation in the ventral visual cortex

Principal Investigator: Naohisa Miyakawa (National Institutes for Quantum and Radiological Science and Technology, Department of Functional Brain Imaging Research)

National Institutes for Quantum and Radiological Science and Technology, Department of Functional Brain Imaging Research

Naohisa Miyakawa

Takafumi Minamimoto, Yuji Nagai (National Institutes for Quantum and Radiological Science and Technology)

Kesuke Kawasaki (Department of Otorhinolaryngology: Head and Neck Surgery, University of Pennsylvania School of Medicine)

Taku Banno (The University of Tokyo)

Noritaka Ichinohe, Wataru Suzuki (National Center of Neurology and Psychiatry)

Toshiki Tani (Institute of Physical and Chemical Research)

The primate ventral visual cortex processes information related to the quality and category of viewed objects. Although it receives extensive feedback connections from within the cortex and from subcortical structures such as the amygdala, current models of object vision are based on hierarchical feedforward connections and neglect the direct and indirect feedback routes. We aim to identify the role of direct and indirect feedback from the amygdala to the ventral visual cortex in the representation of "Shitsukan" information by combining wide-range neural recording with electrocorticography (ECoG) and a targeted chemogenetic technique utilizing Designer Receptors Exclusively Activated by Designer Drugs (DREADDs) and positron emission tomography (PET).

A study on neural representation of cognitive dissonance about Shitsukan using deep neural network

Principal Investigator: Ryusuke Hayashi (National Institute of Advanced Industrial Science and Technology (AIST))

National Institute of Advanced Industrial Science and Technology (AIST)

Ryusuke Hayashi

When an image of a human face is morphed into an artificial object, the halfway-morphed images evoke an eerie feeling in viewers, known as "the uncanny valley effect", which accompanies cognitive dissonance about Shitsukan. On the other hand, recent advances in multimodal machine learning have made it possible to represent images and texts in a joint embedding space using deep neural networks (DNNs), suggesting that neural representations in the visual areas can also be grounded in semantic symbols via the visual representations of DNNs. The aim of this project is to elucidate the neural dynamics that arise when cognitive dissonance about Shitsukan occurs. To this end, we will record neural activity from multiple visual areas of the monkey brain in response to image morphing, and analyze when, where, and what information is processed along the ventral visual stream by mapping the neural representation at each time point and each recording site onto the visual-semantic representation of the DNN.

Neuronal mechanisms underlying perception of Shitsukan in facial images in the anterior temporal lobe

Principal Investigator: Yasuko Sugase-Miyamoto (National Institute of Advanced Industrial Science and Technology (AIST))

National Institute of Advanced Industrial Science and Technology (AIST)

Yasuko Sugase-Miyamoto

Narihisa Matsumoto, Kazuko Hayashi (National Institute of Advanced Industrial Science and Technology)

Kenji Kawano, Kenichi Miura (Kyoto University)

In our social life, we can easily recognize a face and its individual identity, age, and physical condition from the configuration of facial parts and Shitsukan information. This ability is assumed to be based on neural processing in temporal lobe areas that analyze the visual characteristics of faces. To understand the neural processing of the Shitsukan information of faces, neural activity in the macaque temporal lobe was examined, and an effect of Shitsukan on the responses of face-responsive neurons was observed. We focus on revealing the temporal processing stages of Shitsukan information in a population of face-responsive neurons, using both facial and object images, and the role of this population activity in the perception of Shitsukan information.

Deep generative models of complex sensation

Principal Investigator: Haruo Hosoya (Advanced Telecommunications Research Institute International (ATR))

Advanced Telecommunications Research Institute International (ATR)

Haruo Hosoya

Rajani Raman, Yukiyasu Kamitani (Advanced Telecommunications Research Institute International)

Aapo Hyvärinen (University College London)

When we perceive complex sensation (Shitsukan) from textural materials, we presumably capture some statistical structure in the stimulus. Numerous psychophysical and neuroscientific studies have pursued the laws of complex sensation in various domains, where a key theoretical component is a generative model that can systematically generate stimuli giving rise to such complex sensation. However, prior studies have used theories designed for a particular domain, such as textures, and such theories are usually not applicable to other domains. In this study, building on the principal investigator's previous work relating learning theories to visual neural representations, we will develop a new, general framework of generative models for complex sensation based on unsupervised learning. In particular, we intend to achieve this goal by incorporating recent machine-learning approaches called deep generative models and by applying our model to random stimulus generation and to the estimation of stimulus statistics in relation to perception, thus contributing to the science of complex sensation.

Simulating and Capturing Fluid-like Foods

Principal Investigator: Yonghao Yue (Aoyama Gakuin University)

Aoyama Gakuin University

Yonghao Yue

Kentaro Nagasawa (The University of Tokyo)

Ryohei Seto (Kyoto University)

Masato Okada (The University of Tokyo)

We focus on how to capture and reproduce the SHITSUKAN (texture) of fluid-like foods that appear in our daily life, with computer graphics as the primary application. Being able to realistically represent fluid-like foods is important both for conveying their delicious appearance and for analyzing and understanding how humans recognize SHITSUKAN (texture), because we can usually distinguish between different types of foods, or judge their freshness, by their visual cues (motion, color, and surface shape).

In our daily cooking, it is common to mix foods and/or seasonings with different rheological properties, which in turn results in a wide variety of material properties of the mixture. To reproduce such cooking scenes, it is therefore important to handle foods of different materials in a unified way, in other words, with a unified rheological (constitutive) model that can represent the material properties of a mixture from the properties of each constituent under an arbitrary mixing ratio. It is equally important to capture the material properties right after the foods are mixed, because these properties depend heavily on freshness. Hence, we also seek a portable measurement system, which we call a pocket rheometer.

In this project, we aim to develop a unified rheological (constitutive) model for fluid-like foods, as well as an instant measurement system for estimating the parameters needed to reproduce the material in a physically based simulator. We seek such a unified way of measuring and simulating fluid-like foods to reproduce their SHITSUKAN.

Controlling food perception and eating behavior via shitsukan augmentation based on projection mapping techniques

Principal Investigator: Takuji Narumi (The University of Tokyo)

The University of Tokyo

Takuji Narumi

Michitaka Hirose, Tomohiro Tanikawa, Yuji Suzuki, Nami Ogawa (The University of Tokyo)

Yuji Wada, Kazuya Matsubara (Ritsumeikan University)

The purpose of this research is to establish a novel method to modify food perception and eating behavior via shitsukan augmentation based on projection mapping techniques. Focusing on eating, a behavior that involves multiple senses, we aim to model the effect of shitsukan changes made via visual augmentation not only on multisensory perception, including taste, scent, and texture perception, but also on our behavior. Therefore, in this project, we investigate the influence of shitsukan changes via visual augmentation on the estimation of the texture, temperature, taste quality, quantity, freshness, etc. of foods. At the same time, we investigate the accompanying changes in actual multisensory perception, palatability, and food intake. Based on this knowledge, we will establish a method to control the shitsukan of food with projection mapping techniques to realize intended food perception and eating behavior.

Hyperreal display reproducing SHITSUKAN and shape based on high-speed projection

Principal Investigator: Yoshihiro Watanabe (Tokyo Institute of Technology)

Tokyo Institute of Technology

Yoshihiro Watanabe

This research focuses on a new display that reproduces SHITSUKAN. In particular, our target is not traditional computer graphics on a flat monitor but a SHITSUKAN reproduction technology merged into the real world. This technological direction involves various interesting challenges, which originate in the fact that SHITSUKAN is based on human perception. This means that we can pursue the reality of the new SHITSUKAN display based on perceptual identity instead of physical identity. The essence of this research lies in engineering design that shapes the "hyper-real", an unreality extremely close to the real. Against this background, we plan to fuse two SHITSUKAN reproduction technologies: dynamic projection mapping and a display built into physical objects. Our purpose is to realize a display that fully reconfigures both shape and SHITSUKAN.

Perceptual Material Editing by Apparent BRDF Manipulation for Complex Shape Surface

Principal Investigator: Toshiyuki Amano (Wakayama University)

Wakayama University

Toshiyuki Amano

Koki Murakami (Wakayama University)

Shun Ushida (Osaka Institute of Technology)

Goshiro Yamamoto (Kyoto University)

The color of an object that we perceive is affected not only by the object's reflectance properties but also by the illumination projected onto it. In our previous invited research, we realized an innovative display technique that manipulates the apparent BRDF using a Lightfield feedback system on Nishijin textiles containing gold and silver leaf. However, we cannot apply the technique to objects with complex shapes, because the object occludes both the projected illumination and its measurement. In addition, the target must be fixed to keep the pixel correspondence between projection and measurement. In this research, we attempt to solve these problems with a technique that updates the pixel mapping from shape information and displacement sensing. Furthermore, we will realize advanced Shitsukan manipulation that alters the reflection property not only toward glossy or translucent surface appearance but also toward structural colors such as Raden (mother-of-pearl inlay) or pearl.
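The closed-loop idea behind a projector-camera feedback system can be sketched per pixel. The toy model below is an assumption, not the authors' Lightfield feedback system: it treats each camera reading as `reflectance * projector + ambient`, assumes the per-pixel gain is known from calibration, and repeatedly nudges the projector value toward a target appearance, clipping to the projector's [0, 1] range. The function name and parameters are hypothetical. The list index stands in for the fixed projector-camera pixel correspondence, which is exactly what breaks when the target moves or occludes the projection.

```python
def simulate_feedback(reflectance, ambient, target, gain=0.5, iters=50):
    """Per-pixel proportional feedback on projector input under a toy linear camera model.

    reflectance, ambient, target: per-pixel lists in camera units.
    Returns (projector values, final simulated camera readings).
    """
    proj = [0.0] * len(target)
    for _ in range(iters):
        # Simulated camera measurement for the current projector image.
        measured = [r * p + a for r, p, a in zip(reflectance, proj, ambient)]
        # Move each pixel a fraction `gain` of the way toward its target, within projector limits.
        proj = [
            min(1.0, max(0.0, p + gain * (t - m) / r))
            for p, t, m, r in zip(proj, target, measured, reflectance)
        ]
    measured = [r * p + a for r, p, a in zip(reflectance, proj, ambient)]
    return proj, measured


# Two reachable targets and one (third pixel) that exceeds what the surface can return.
print(simulate_feedback([0.8, 0.3, 0.3], [0.05, 0.05, 0.05], [0.45, 0.2, 0.9]))
```

A pixel whose target is brighter than `reflectance + ambient` saturates at full projector output, a reminder that the apparent reflectance can only be pushed within limits set by the physical surface.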

High-resolution tactile display with electrical stimulus and electrovibration stimulus

Principal Investigator: Hiroki Ishizuka (Osaka University)

Osaka University

Hiroki Ishizuka

Hidenori Yoshimura, Shoki Kitaguchi (Kagawa University)

In this project, we develop a hybrid tactile display that combines electrovibration stimulus and electrical stimulus. We also optimize the presented stimulus with finite element method (FEM) analysis. To provide more realistic tactile sensations, a tactile display that can present multiple types of tactile stimuli is strongly required. An electrovibration tactile display can provide only horizontal frictional stimuli. Therefore, we combine the electrovibration tactile display with an electrical tactile display that can provide tactile sensations such as pressure or vibration. Additionally, we develop an FEM model that simulates the use of the tactile display and use it to optimize the input signal to the display.

Image-Based Modeling of Transparent and Translucent Objects via Multispectral Imaging for SHITSUKAN Editing

Principal Investigator: Takahiro Okabe (Kyushu Institute of Technology)

Kyushu Institute of Technology

Takahiro Okabe

Ryo Matsuoka, Chao Wang (Kyushu Institute of Technology)

Transparent and translucent objects evoke rich and unique SHITSUKAN due to the interactions between incident rays and those objects, such as reflection, refraction, scattering, and absorption. Since those interactions depend on the wavelength of the incident ray, we study image-based modeling of transparent and translucent objects via multispectral imaging for SHITSUKAN editing. Specifically, we estimate the geometric properties of transparent objects and the photometric and spectral properties of translucent objects by using multispectral light sources and multiband cameras. We then use those estimated physical properties for photorealistic image synthesis and editing.

Solution to inverse problems of tactile displays based on experimental analyses with tactile samples

Principal Investigator: MIKI, Norihisa (Keio University, Department of Mechanical Engineering)

Keio University, Department of Mechanical Engineering

MIKI, Norihisa

The objective of this project is to construct a universal solution to the inverse problems of tactile displays, that is, to deduce the input to a tactile display that provides a given tactile sensation to the user. In conventional research on tactile displays, the tactile sensations evoked by a display with a given input are investigated, which is a forward problem. However, to apply tactile displays practically to human-computer interaction, the inverse problems must be solved, and this requires quantifying tactile sensation.

In this work, we begin by solving the inverse problems to find the inputs that display the primary tactile properties, namely surface roughness, stiffness, thermal conductivity, and surface energy. We use the "encode and present" approach of our mechanotactile display using microfabricated tactile samples. The combination of the primary tactile properties results in the tactile sensation of real samples. The contribution of each primary-property input to the tactile sensation of real samples depends on the characteristics of our tactile perception. Can the inputs be added linearly, or do they combine non-linearly? What is the weight of each input in that addition? The answers to these questions will lead to a universal solution to tactile inverse problems and, more ambitiously, to an understanding of the essence of tactile sensation, e.g., what makes "tree" be perceived as "tree".
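The question of whether the inputs add linearly, and with what weights, can be posed as a regression. The sketch below is hypothetical and uses only the Python standard library: each row of `X` holds the input levels for one real sample (an intercept column plus one column per primary tactile property), `y` holds a rated sensation, and the weights are fitted by ordinary least squares through the normal equations. If adding an interaction column sharply reduces the residual, that would point to non-linear combination.

```python
def fit_linear_weights(X, y):
    """Least-squares weights w minimizing ||X w - y||^2 via the normal equations (X^T X) w = X^T y."""
    k = len(X[0])
    # Augmented normal-equation matrix [X^T X | X^T y].
    A = [
        [sum(row[i] * row[j] for row in X) for j in range(k)]
        + [sum(row[i] * t for row, t in zip(X, y))]
        for i in range(k)
    ]
    # Gaussian elimination with partial pivoting.
    for col in range(k):
        pivot = max(range(col, k), key=lambda r: abs(A[r][col]))
        A[col], A[pivot] = A[pivot], A[col]
        if abs(A[col][col]) < 1e-12:
            raise ValueError("inputs are collinear; weights are not identifiable")
        for r in range(col + 1, k):
            f = A[r][col] / A[col][col]
            for c in range(col, k + 1):
                A[r][c] -= f * A[col][c]
    # Back substitution.
    w = [0.0] * k
    for i in reversed(range(k)):
        w[i] = (A[i][k] - sum(A[i][j] * w[j] for j in range(i + 1, k))) / A[i][i]
    return w


def residual_ss(X, y, w):
    """Sum of squared residuals of the linear prediction X w against y."""
    return sum((sum(a * b for a, b in zip(row, w)) - t) ** 2 for row, t in zip(X, y))
```

For example, with synthetic ratings that depend on the product of two inputs, a model with columns `[1, x1, x2]` leaves a clear residual, whereas appending an `x1 * x2` column fits the data exactly; with real ratings the comparison would of course be noisy.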