A01 Shitsukan Mechanism

A01-1 Visual, auditory and tactile SHITSUKAN recognition mechanisms based on signal modulations

Principal Investigator : Shin’ya Nishida(Kyoto University)

Principal Investigator

Kyoto University

Shin’ya Nishida

Co-Investigator

NTT Communication Science Labs, Nippon Telegraph and Telephone Corp

Shigeto Furukawa

Co-Investigator

Tohoku University

Kyoko Suzuki

Co-Investigator

University of Electro-Communications

Keiji Yanai

Co-Investigator

Takahiro Kawabe, Kazushi Maruyama, HO Hsin-Ni, Shinobu Kuroki, Kentaro Yasu, Masataka Sawayama, Daiki Fukiage, Takumi Yokosaka, Kenichi Hosokawa, Jan Jaap van Assen, Hsin-I Liao, Yuki Terashima, Takuya Takumi (NTT Communication Science Labs)

Yuka Oishi(Niigata University of Health and Welfare)

Chifumi Iseki(Yamagata University)

Yumiko Saito, Nobuko Kawakami, Marie Oyafuso(Tohoku University)

Mikio Shinya(Toho University)

Hirotsugu Yamamoto, Tomoharu Ishikawa(Utsunomiya University)

Masashi Nakatani(Keio University)

Chihiro Hiramatsu, Naoko Takahashi, Mori Yuki, Ryosuke Oda, Xu Chen (Kyushu University)

Outline

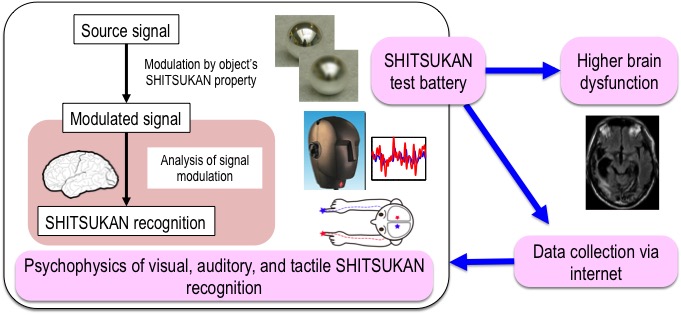

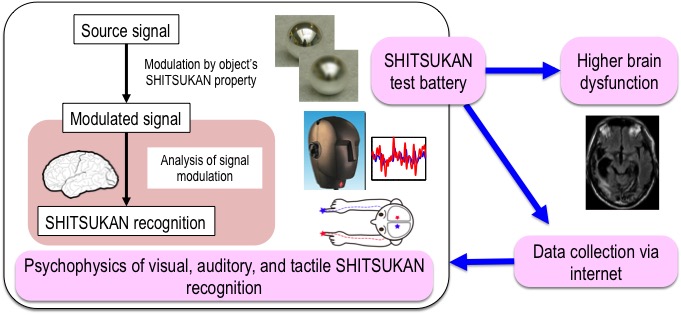

SHITSUKAN information of an object is embedded in the complex modulation patterns of sensory signals produced by interactions among the object, the environment and the observer. Based on this idea, we attempt to identify the signal modulations responsible for a variety of visual, auditory and tactile SHITSUKAN, and the underlying recognition mechanisms. By analyzing the physical properties of SHITSUKAN stimuli and the corresponding SHITSUKAN responses of human observers, we will reveal the functional and computational mechanisms of SHITSUKAN recognition. With regard to research strategy, in addition to rigorous psychophysical experiments with a limited number of observers in experimental rooms, we will develop a SHITSUKAN test battery to collect a large volume of psychological response data through the internet. By adapting the same test battery to evaluate the SHITSUKAN recognition performance of patients with higher brain dysfunction, we will gain insights into the neural mechanism of SHITSUKAN recognition.

Achievements

- Kawabe T, Fukiage T, Sawayama M, & Nishida S: Deformation Lamps: A Projection Technique to Make Static Objects Perceptually Dynamic, ACM Transactions on Applied Perception, Vol. 13, No. 2, Article 10, 2016.

- Kuroki S, Watanabe J, Nishida S: Neural timing signal for precise tactile timing judgments, Journal of Neurophysiology, 115(3): 1620-1629, 2016.

- Ho, H.-N, Iwai D, Yoshikawa Y, Watanabe J, Nishida S: Impact of hand and object colors on object temperature perception, Temperature, 2 (3): 344-345, 2015.

- Kawabe T, Maruya K, Nishida, S.: Perceptual transparency from image deformation, Proceedings of the National Academy of Sciences of the United States of America, 112(33):E4620-7, 2015.

- Kuroki S, Hagura N, Nishida S, Haggard P, Watanabe J: Sanshool on The Fingertip Interferes with Vibration Detection in a Rapidly-Adapting (RA) Tactile Channel, PLOS one, 2016.

- Rider A, Nishida S, & Johnston A: Multiple-stage ambiguity in motion perception reveals global computation of local motion directions, Journal of Vision, 16 (15):7, 1-11, 2016.

- Kaneko T, Yanai K: Event Photo Mining from Twitter Using Keyword Bursts and Image Clustering, Neurocomputing, Vol.172, pp.143-158, 2016.

- Ho H.-N., Sato K, Kuroki S, Watanabe J, Maeno T, Nishida S: Physical-Perceptual Correspondence for Dynamic Thermal Stimulation, IEEE Transactions on Haptics, 2016.

- Hisakata R, Nishida S, Johnston A: An adaptable metric shapes perceptual space, Current Biology, 26: 1911-1915, 2016.

- Kawabe T, Nishida S: Seeing jelly: judging elasticity of a transparent object, ACM Symposium on Applied Perception (SAP), 2016.

- Matsuo S, Yanai K : CNN-based Style Vector for Style Image Retrieval, ACM International Conference on Multimedia Retrieval (ICMR), 2016.

- Shimoda W, Yanai K: Weakly-Supervised Segmentation by Combining CNN Feature Maps and Object Saliency Maps, IAPR International Conference on Pattern Recognition (ICPR), 2016.

- Shimoda W, Yanai K: Distinct Class Saliency Maps for Weakly Supervised Semantic Segmentation, European Conference on Computer Vision (ECCV), 2016.

- Keiji Yanai, Ryosuke Tanno and Koichi Okamoto, Efficient Mobile Implementation of A CNN-based Object Recognition System, ACM Multimedia, 2016.

- Furukawa S.: Natural combinations of interaural time and level differences in realistic auditory scenes, 39th ARO Midwinter Meeting, 2016.

- Kuroki S, Watanabe J & Nishida S: Integration of vibrotactile frequency information beyond the mechanoreceptor channel and somatotopy, Scientific Reports 7, 2758, 1-13, 2017.

- Hayashi R, Watanabe O, Yokoyama H & Nishida S: A new analytical method for characterizing non-linear visual processes with stimuli of arbitrary distribution: theory and applications, Journal of Vision, 17(6):14, 1-20, 2017.

- Yokosaka T, Kuroki S, Watanabe J & Nishida S: Linkage between free exploratory movements and subjective tactile ratings, IEEE Transactions on Haptics, 10 (2): 217-225, 2017.

- Sawayama M, Adelson, EH, & Nishida S: Visual wetness perception based on image color statistics, Journal of Vision, 17(5):7, 1-24, 2017.

- Sawayama M, Nishida S, & Shinya M: Human perception of sub-resolution fineness of dense textures based on image intensity statistics, Journal of Vision, 17 (4):8, 1-18, 2017.

- Yanai K: Unseen Style Transfer Based on a Conditional Fast Style Transfer Network, International Conference on Learning Representations Workshop Track, 2017.

- Fukiage T, Kawabe T & Nishida S: Hiding of Phase-Based Stereo Disparity for Ghost-Free Viewing Without Glasses, ACM SIGGRAPH 2017(Technical Papers).

- Kuroki S & Nishida S: Human tactile detection of within- and inter-finger spatiotemporal phase shift of low-frequency vibrations, Scientific Reports, 8, 4288, 1-10, 2018.

- Sawayama M & Nishida S: Material and shape perception based on two types of intensity gradient information, PLOS Computational Biology.

- Yokosaka T, Kuroki S, Watanabe J & Nishida S: Estimating tactile perception by observing explorative hand motion of others, IEEE Transactions on Haptics.

A01-2 Neural Basis of Shitsukan Perception and the Mechanisms of its Learning and Modulation

Principal Investigator : Hidehiko Komatsu(Brain Science Institute, Tamagawa University)

Principal Investigator

Brain Science Institute, Tamagawa University

Hidehiko Komatsu

Co-Investigator

The University of Tokyo

Isamu Motoyoshi

Co-Investigator

Advanced Telecommunications Research Institute International

Takeaki Shimokawa

Co-Investigator

National Institute for Physiological Sciences

Naokazu Goda

Co-Investigator

Isao Yokoi (National Institute for Physiological Sciences)

Akiko Nishio, Mika Baba, Harumi Saito, Akihiko Masaoka, Kei Kanari (Tamagawa University)

Outline

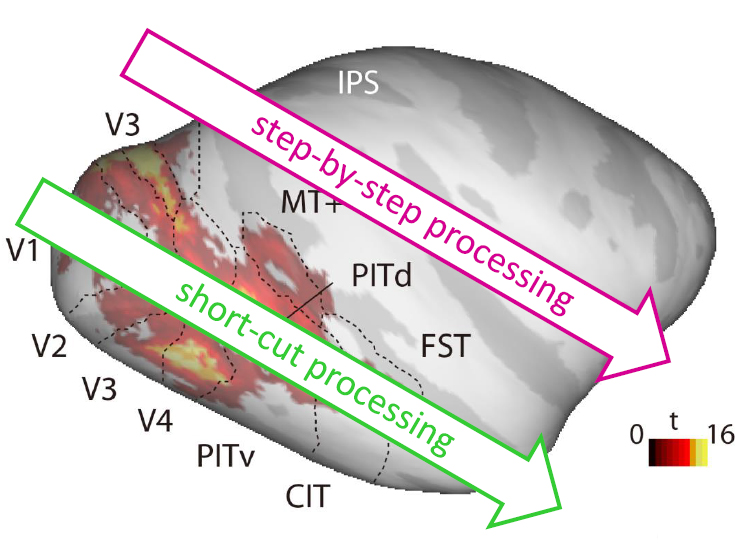

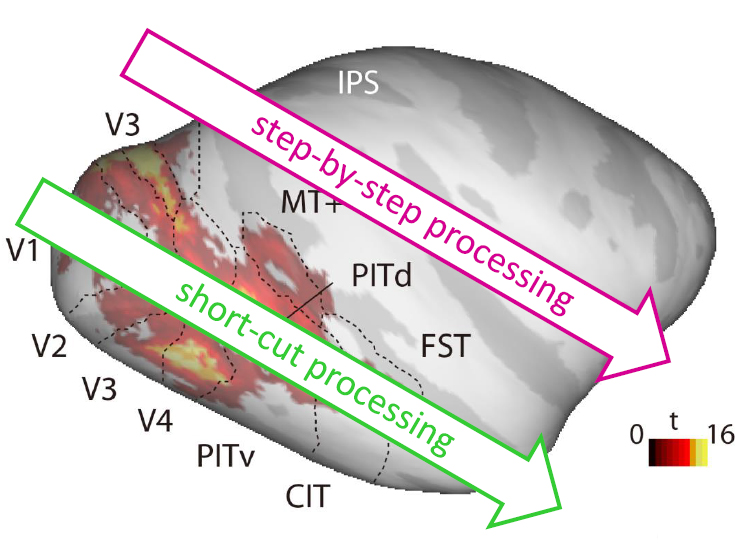

Shitsukan perception includes functions such as material discrimination of objects and evaluation of surface qualities such as gloss and translucency. Our goal is to understand the multi-layered processing underlying Shitsukan perception, which consists of step-by-step processing of Shitsukan information in the hierarchically organized visual system as well as short-cut processing that takes advantage of relatively simple visual features that correlate with specific Shitsukan of objects. For this purpose, we will conduct psychophysical and behavioral experiments to study the properties of these two types of processing in humans and monkeys, and we will study brain activities related to the representation of Shitsukan information and how they are modulated by learning and experience.

Achievements

- Sato H, Motoyoshi I, Sato T: On-Off asymmetry in the perception of blur, Vision Research, 120(1): 5-10, 2016.

- Sato H, Motoyoshi I, Sato T: On-off selectivity and asymmetry in apparent contrast: An adaptation study, Journal of Vision, Vol. 16, issue 1, Article 14 (11pages), 2016.

- Suzuki W, Banno T, Miyakawa N, Abe H, Goda N, Ichinohe N: Mirror neurons in a New World monkey, common marmoset, Frontiers in Neuroscience, Vol. 9, Article 459 (14 pages), 2015.

- Yang J, Kanazawa S, Yamaguchi MK, Motoyoshi I. : Pre-constancy vision in infants, Current Biology, 25(24): 3209-3212, 2015.

- Goda N, Yokoi I, Tachibana A, Minamimoto T, Komatsu H: Crossmodal Association of Visual and Haptic Material Properties of Objects in the Monkey Ventral Visual Cortex, Current Biology, 26:928-934, 2016.

- Sanada TM, Namima T, Komatsu H: Comparison of the color selectivity of macaque V4 neurons in different color spaces, J Neurophysiology, in press, 2016.

- Kondo, D. & Motoyoshi, I.: Spatiotemporal properties of multiple-color channels in the human visual system, Journal of Vision, Vol. 16, issue 9, Article 14 (13pages), 2016.

- Motoyoshi, I. & Mori, S.: Visual processing of emotional information in natural surfaces., European Conference on Visual Perception 2016.

- Motoyoshi, I. & Mori, S.: Image statistics and the affective responses to visual surfaces., Annual Meeting of Vision Sciences Society 2016.

- Okazawa G, Tajima S, Komatsu H: Gradual development of visual texture-selective properties between macaque areas V2 and V4, Cerebral Cortex.

A01-3 Neural Mechanism of Affective Response with Shitsukan Perception

Principal Investigator : Takafumi Minamimoto(National Institutes for Quantum and Radiological Science and Technology)

Principal Investigator

National Institutes for Quantum and Radiological Science and Technology

Takafumi Minamimoto

Co-Investigator

National Center of Neurology and Psychiatry

Manabu Honda

Co-Investigator

Toyohashi University of Technology

Kowa Koida

Co-Investigator

Yuichi Yamashita, Yui Matsumoto, Mitsuhiro Miyamae, Osamu Ueno (National Institute for Physiological Sciences)

Makiko Yamada, Toshiyuki Hirabayashi, Yuji Nagai (National Institutes for Quantum and Radiological Science and Technology)

Outline

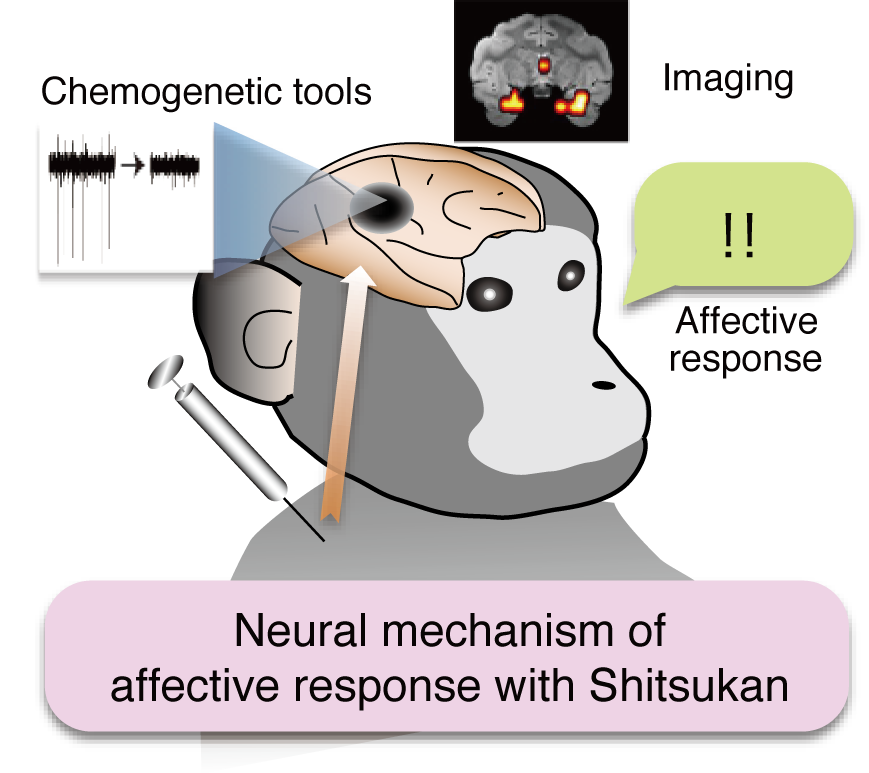

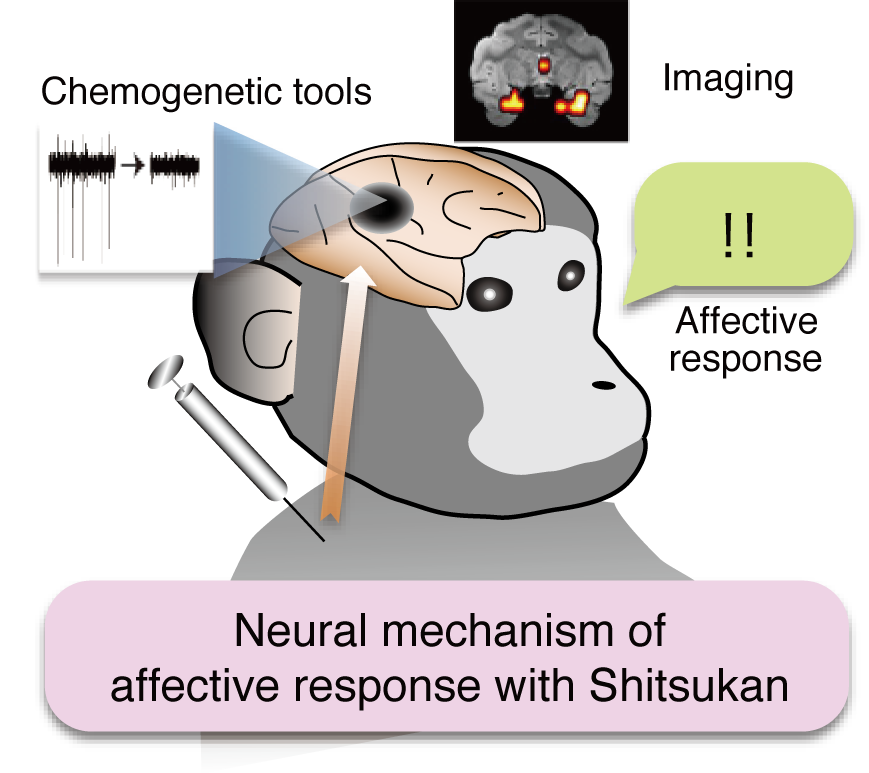

Shitsukan perception is often accompanied by emotional/affective responses as well as valuation and decision-making. Our goal is to understand the neural mechanisms of the affective responses concomitant with Shitsukan perception, especially the underlying neural circuits and molecular mechanisms. We also aim to identify the Shitsukan properties that are critical for specific affective responses. We will develop integrated research on affective neuroscience using Shitsukan resources, including 3D objects and presentation methods. In addition to unique scientific outcomes, our research may provide industrially useful means for affective evaluation and for improving Shitsukan quality.

Achievements

- Hori Y, Ogura J, Ihara N, Higashi T, Tashiro T, Honda M, Hanakawa T: Development of a removable head fixation device for longitudinal behavioral and imaging studies in rats, J Neurosci Methods, 264:11-15, 2016.

- McCairn KW, Nagai Y, Hori Y, Ninomiya T, Kikuchi E, Lee JY, Suhara T, Iriki A, Minamimoto T, Takada M, Isoda M, Matsumoto M: A primary role for nucleus accumbens and related limbic network in vocal tics, Neuron 89(2):300-307, 2016.

- Oi N, Tokunaga M, Suzuki M, Nagai Y, Nakatani Y, Yamamoto N, Maeda J, Minamimoto T, Zhang M-R, Suhara T, Higuchi M: Development of Novel PET Probes for Central 2-Amino-3-(3-hydroxy-5-methyl-4-isoxazolyl) propionic acid (AMPA) Receptors, J. Med. Chem, 58(21):8444-8462, 2015.

- Yamada H, Inokawa H, Hori Y, Pan X, Matsuzaki R, Nakamura K, Samejima K, Shidara M, Kimura M, Sakagami M, Minamimoto T: Characteristics of fast-spiking neurons in the striatum of behaving monkeys, Neurosci Res, 105:2-18, 2015.

- Hori Y, Ihara N, Teramoto N, Kunimi M, Honda M, Kato K, Hanakawa T: Noninvasive quantification of cerebral metabolic rate for glucose in rats using (18)F-FDG PET and standard input function, J Cereb Blood Flow Metab 35: 1664-1670 2015.

- Koga K, Yoshinaga M, Uematsu Y, Nagai Y, Miyakoshi N, Shimoda Y, Fujinaga M, Minamimoto T, Zhang MR, Higuchi M, Ohtake N, Suhara T, Chaki S: TASP0434299: A novel pyridopyrimidin-4-one derivative as a radioligand for vasopressin V1B receptor, J Pharmacol Exp Ther, 357:495-508, 2016.

- Eldridge MA, Lerchner W, Saunders RC, Kaneko H, Krausz KW, Gonzalez FJ, Ji B, Higuchi M, Minamimoto T, Richmond BJ.: Chemogenetic disconnection of monkey orbitofrontal and rhinal cortex reversibly disrupts reward value., Nature Neuroscience, 19: 37-39, 2015.

- Nagai Y, Kikuchi E, Lerchner W, Inoue KI, Ji B, Eldridge MAG, Kaneko H, Kimura Y, Oh-Nishi A, Hori Y, Kato Y, Hirabayashi T, Fujimoto A, Kumata K, Zhang MR, Aoki I, Suhara T, Higuchi M, Takada M, Richmond BJ, Minamimoto T: PET imaging-guided chemogenetic silencing reveals a critical role of primate rostromedial caudate in reward evaluation, Nat Commun, 7: 13605, 2016.

- Yamashita Y, Fujimura T, Katahira K, Honda M, Okada M, and Okanoya K: Context sensitivity in the detection of changes in facial emotion, Scientific Reports, 6: 27798-27798, 2016.

- Ji B, Kaneko H, Minamimoto T, Inoue H, Takeuchi H, Kumata K, Zhang MR, Aoki I, Seki C, Ono M, Tokunaga M, Tsukamoto S, Tanabe K, Shin RM, Minamihisamatsu T, Kito S, Richmond BJ, Suhara T, Higuchi M: DREADD-expressing neurons in living brain and their application to implantation of iPSC-derived neuroprogenitors, J. Neuroscience, 36:11544-11558, 2016.

- H. Sawahata, S. Yamagiwa, A. Moriya, T. Dong, H. Oi, Y. Ando, R. Numano, M. Ishida, K. Koida, T. Kawano: Single 5 μm diameter needle electrode block modules for unit recordings in vivo, Scientific Reports, 6: 35806, 2016.

- Koga K, Maeda J, Tokunaga M, Hanyu M, Kawamura K, Ohmichi M, Nakamura T, Nagai Y, Seki C, Kimura Y, Minamimoto T, Zhang MR, Fukumura T, Suhara T, Higuchi M: Development of TASP0410457 (TASP457), a novel dihydroquinolinone derivative as a PET radioligand for central histamine H3 receptors, EJNMMI Research, 6:11, 2016.

- Wang L, Mori W, Cheng R, Yui J, Hatori A, Ma L, Zhang Y, Rotstein BH, Fujinaga M, Shimoda Y, Yamasaki T, Xie L, Nagai Y, Minamimoto T, Higuchi M, Vasdev N, Zhang MR, Liang SH: Synthesis and preclinical evaluation of sulfonamido-based [11C-Carbonyl]-carbamates and ureas for imaging monoacylglycerol lipase, Theranostics, 6(8):1145-1159, 2016.

- Yamashita Y, Kawai N, Ueno O, Matsumoto Y, Oohashi T, Honda M: Induction of prolonged natural lifespans in mice exposed to acoustic environmental enrichment, Scientific Reports.

- Oshiyama C, Sutoh C, Miwa H, Okabayashi S, Hamada H, Matsuzawa D, Hirano Y, Takahashi T, Niwa S, Honda M, Sakatsume K, Nishimura T, Shimizu E: Gender-specific associations of depression and anxiety symptoms with mental rotation, Journal of Affective Disorders.

- Kurashige H, Yamashita Y, Hanakawa T and Honda M: A Knowledge-Based Arrangement of Prototypical Neural Representation Prior to Experience Contributes to Selectivity in Upcoming Knowledge Acquisition, Frontiers in Human Neuroscience, 12: 111-1-111-19, 2018.

- Morikawa Y, Yamagiwa S, Sawahata H, Numano R, Koida K, Ishida M, Kawano T: Ultrastretchable Kirigami bioprobes, Advanced Healthcare Materials, 7 (3) 2018.

- Ando Y, Sawahata H, Kawano T, Koida K, Numano R: Fiber bundle endomicroscopy with multi-illumination for three-dimensional reflectance image reconstruction, J. Biomed. Opt., 23(2), 020502, 2018.

- Kubota Y, Yamagiwa S, Sawahata H, Idogawa S, Tsuruhara S, Numano R, Koida K, Ishida M, Kawano T: Long nanoneedle-electrode devices for extracellular and intracellular recording in vivo, Sensors and Actuators B: Chemical, 258, 1287-1294, 2018.

- Yokoi I, Tachibana A, Minamimoto T, Goda N, Komatsu H: Dependence of behavioral performance on material category in an object grasping task with monkeys, J. Neurophysiol.

- Cheng R, Mori W, Ma L, Alhouayek M, Hatori A, Zhang Y, Ogasawara D, Yuan G, Chen Z, Zhang X, Shi H, Yamasaki T, Xie L, Kumata K, Fujinaga M, Nagai Y, Minamimoto T, Svensson M, Wang L, Du Y, Ondrechen M, Vasdev N, Cravatt B, Fowler C, Zhang MR, Liang S: In vitro and in vivo evaluation of 11C-labeled azetidine-carboxylates for imaging monoacylglycerol lipase by PET imaging studies, J. Med. Chem., 61(6):2278-2291, 2018.

A01-4 Modeling Complex Appearance of Real objects for Shitsukan Analysis

Principal Investigator : Imari Sato(National Institute of Informatics)

Principal Investigator

National Institute of Informatics

Imari Sato

Co-Investigator

Nara Institute of Science and Technology

Yasuhiro Mukaigawa

Co-Investigator

The University of Tokyo

Yoichi Sato

Co-Investigator

National Institute of Informatics

Yinqiang Zheng

Co-Investigator

Tokyo University of the Arts

Yuichiro Taira

Co-Investigator

Nara Institute of Science and Technology

Hiroyuki Kubo

Co-Investigator

Mihoko Shimano, Tatsuki Tahara (National Institute of Informatics)

Hiroki Okawa, SUBPA-ASA Art, Yuta Asano(Tokyo Institute of Technology)

Nie Shijie(The Institute of Statistical Mathematics)

Outline

The goal of our project is to model the photometric properties of real object surfaces for analyzing Shitsukan perception. In computer vision, various techniques have been proposed for accurately modeling and rendering an object's appearance under complex lighting. In this project, we extend such analysis further to model more complicated photometric properties such as translucency, structural color, and fluorescence, and use them for Shitsukan analysis.

Achievements

- Zheng Y, Fu Y, Lam A, Sato I, Sato Y: Separating Fluorescent and Reflective Components by Using a Single Hyperspectral Image, Proc. International Conference on Computer Vision(ICCV2015), 2015.

- Tanaka K, Mukaigawa Y, Kubo H, Matsushita Y, Yagi Y: Recovering Inner Slices of Layered Translucent Objects by Multi-frequency Illumination, IEEE Transactions on Pattern Analysis and Machine Intelligence, Volume: 39, Issue: 4, pp.746-757, 2016.

- Fu Y, Lam A, Sato I, Sato Y: Adaptive spatial-spectral dictionary learning for hyperspectral image restoration, International Journal of Computer Vision, 122(2) 228-245, 2016.

- Fu Y, Lam A, Sato I, Okabe T, Sato Y.: Reflectance and Fluorescence Spectral Recovery via Actively Lit RGB Images, IEEE Transactions on Pattern Analysis and Machine Intelligence, 38(7) 1313-1326, 2016.

- Fu Y, Lam A, Sato I, Okabe T, Sato Y.: Separating Reflective and Fluorescent Components using High Frequency Illumination in the Spectral Domain, IEEE Transactions on Pattern Analysis and Machine Intelligence, 38(5) 965-978, 2016.

- Ozawa K, Sato I, Yamaguchi M: Hyperspectral Photometric Stereo for Single Capture, Journal of the Optical Society of America A (JOSA A), 34(3):384-394, 2017.

- Lu F, Sato I, Sato Y: SymPS: BRDF Symmetry Guided Photometric Stereo for Shape and Light Source Estimation, IEEE Transactions on Pattern Analysis and Machine Intelligence, 2017.

- Okawa H, Zheng Y, Lam A, Sato I: Spectral Reflectance Recovery with Interreflection Using a Hyperspectral Image, Proc. Asian Conference on Computer Vision (ACCV 2016), 2016.

- Kobayashi Y, Morimoto T, Sato I, Mukaigawa Y, Tomono, Ikeuchi K.: Reconstructing Shapes and Appearances of Thin Film Objects Using RGB Images, Proc. Computer Vision and Pattern Recognition (CVPR2016), 2016.

- Fu Y, Zheng Y, Sato I, Sato Y.: Exploiting Spectral-Spatial Correlation for Coded Hyperspectral Image Restoration, Proc. Computer Vision and Pattern Recognition (CVPR2016). 2016.

- Subpa-asa A, Fu Y, Zheng Y, Amano T, Sato I: Direct and Global Component Separation from a Single Image using Basis Representation, Proc. Asian Conference on Computer Vision (ACCV 2016), 2016.

- Asano Y, Zheng Y, Nishino K, Sato I: Shape from Water: Bispectral Light Absorption for Depth Recovery, Proc. European Conference on Computer Vision (ECCV2016), 2016.

- Ohara N, Zheng Y, Sato I, Nakamura T, Yamaguchi M: Simultaneous Linear Separation and Unmixing of Fluorescent and Reflective Components from a Single Hyperspectral Image, Proc. International Conference on Image Processing (ICIP2016), 2016.

- Tanaka K, Mukaigawa Y, Kubo H, Matsushita Y, Yagi Y: Recovering Transparent Shape from Time-of-Flight Distortion, Proc. Computer Vision and Pattern Recognition (CVPR2016), 2016.

- M. Shimano, H. Okawa, Y. Asano, R. Bise, K. Nishino, I. Sato: Wetness and Color from A Single Multispectral Image, IEEE Conference on Computer Vision and Pattern Recognition 2017.

- Nie S, Gu L, Zheng Y, Lam A, Ono N, Sato I: Deeply Learned Filter Response Functions for Hyperspectral Reconstruction, IEEE Conference on Computer Vision and Pattern Recognition (CVPR2018).

B01 Shitsukan Mining

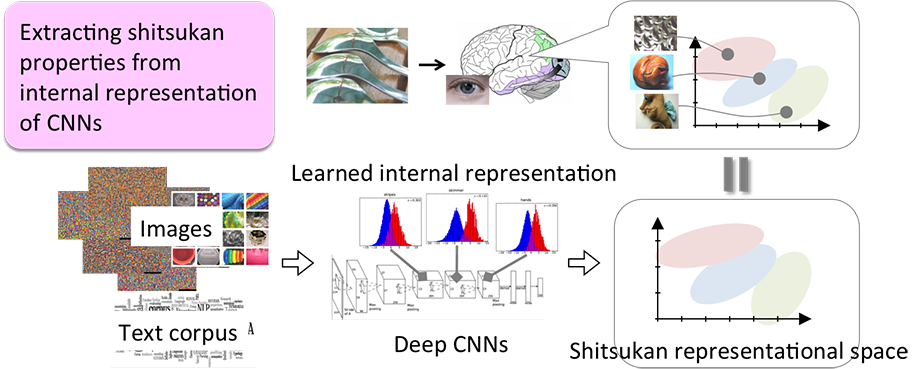

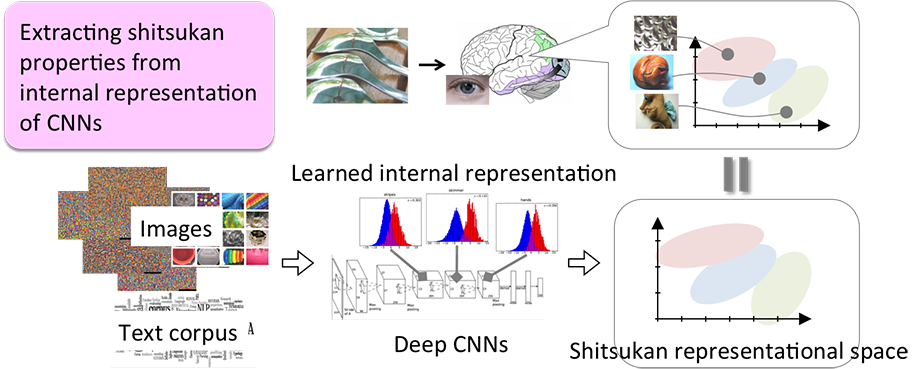

B01-1 Deep Learning of Shitsukan Representational Space Using Images and Languages

Principal Investigator : Takayuki Okatani(Tohoku University)

Principal Investigator

Tohoku University

Takayuki Okatani

Co-Investigator

Niigata University

Keita Kawasaki

Co-Investigator

Xing Liu (Tohoku University)

Outline

We aim to develop a computer system that recognizes surface qualities, or shitsukan, of an object from its image. Toward this end, we study methods for constructing a representational space of shitsukan information by performing deep learning that uses a large-scale dataset. We plan to mine representations for various shitsukan properties by performing a type of transfer learning using convolutional neural networks (CNNs). Our approach is to train CNNs for a "proxy task" of shitsukan recognition and then extract the internal representations (activation patterns of internal layers for an input image) learned by the CNNs, from which we will construct a shitsukan representational space. This is based on our hypothesis that CNNs that have learned to solve the proxy task will automatically acquire sufficient factors for representing shitsukan information in their learned internal representations. Shitsukan is difficult to represent verbally, which makes it hard to transmit and share between humans or between humans and machines. The ultimate goal of the research project is to "quantify" such shitsukan and create a new research field by further extending the shitsukan representational space that will be developed in this project.
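The idea of reading out internal activations and treating them as coordinates in a representational space can be illustrated with a minimal sketch. Everything in it is an assumption made for illustration: `TinyProxyNet` is a hypothetical two-layer network with random, untrained weights standing in for a CNN trained on a proxy task, the images are random arrays, and cosine similarity is just one possible way to compare points in the resulting space.

```python
import numpy as np

rng = np.random.default_rng(0)


def relu(x):
    return np.maximum(x, 0.0)


class TinyProxyNet:
    """Hypothetical stand-in for a CNN trained on a proxy task.

    The weights are random here; in practice they would come from training.
    """

    def __init__(self, n_in, n_hidden, n_out, rng):
        self.w1 = rng.normal(0.0, 1.0 / np.sqrt(n_in), (n_in, n_hidden))
        self.w2 = rng.normal(0.0, 1.0 / np.sqrt(n_hidden), (n_hidden, n_out))

    def hidden(self, x):
        # Internal representation: activation pattern of the hidden layer.
        return relu(x @ self.w1)

    def forward(self, x):
        # Output for the proxy task (unused below, shown for completeness).
        return self.hidden(x) @ self.w2


def representational_space(net, images):
    """Map each image to a unit-length vector of hidden activations."""
    feats = net.hidden(images.reshape(len(images), -1))
    norms = np.linalg.norm(feats, axis=1, keepdims=True)
    return feats / np.maximum(norms, 1e-12)


# Toy "images": 5 random 8x8 arrays (assumption, not real material images).
images = rng.random((5, 8, 8))
net = TinyProxyNet(n_in=64, n_hidden=16, n_out=3, rng=rng)

embedding = representational_space(net, images)  # one row per image
similarity = embedding @ embedding.T             # pairwise cosine similarity
```

With a trained network, rows of `embedding` that lie close together would correspond to images the network treats as similar, which is the sense in which the activations form a representational space.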

Achievements

- Orikasa T, Okatani T: A Gaze-reactive Display for Simulating Depth-of-field of Eyes When Viewing Scenes with Multiple Depths, IEICE TRANSACTIONS on Information and Systems, E99.D(3), 739-746, 2015.

- Akashi Y and Okatani T: Separation of reflection components by sparse non-negative matrix factorization, Computer Vision and Image Understanding, 146: 77-85, 2015.

- Shuohao Li, Kota Yamaguchi, and Takayuki Okatani: Attention to Describe Products with Attributes, International Conference on Machine Vision Applications, 2016.

- Muraoka, M., Maharjan, S., Saito, M., Yamaguchi, K., Okazaki, N., Okatani, T., & Inui, K: Recognizing Open-Vocabulary Relations between Objects in Images, Pacific Asia Conference on Language, Information and Computation, 2016.

- Sun Z, Ozay M, Okatani T: Design of Kernels in Convolutional Neural Networks for Image Classification, European Conference on Computer Vision 2016.

- Vittayakorn S, Umeda T, Murasaki K, Sudo K, Okatani T, Yamaguchi K: Automatic Attribute Discovery with Neural Activations, European Conference on Computer Vision 2016.

- Yashima T, Okazaki N, Inui K, Yamaguchi K, Okatani T: Learning to Describe E-Commerce Images from Noisy Online Data, Asian Conference on Computer Vision 2016.

- Zhang Y, Ozay M, Liu X, Okatani T: Integrating Deep Features for Material Recognition, International Conference on Pattern Recognition, 2016.

- Liu X, Ozay M, Zhang Y, Okatani T: Learning Deep Representations of Objects and Materials for Material Recognition, Vision Science Society 16th Annual Meeting, 2016.

- Masanori Suganuma, Mete Ozay, and Takayuki Okatani: Exploiting the Potential of Standard Convolutional Autoencoders for Image Restoration by Evolutionary Search, International Conference on Machine Learning (ICML).

- Duy-Kien Nguyen and Takayuki Okatani: Improved Fusion of Visual and Language Representations by Dense Symmetric Co-Attention for Visual Question Answering, Computer Vision and Pattern Recognition (CVPR2018).

- Zhun Sun, Ozay Mete, Yan Zhang, Xing Liu, and Takayuki Okatani: Feature Quantization for Defending Against Distortion of Images, Computer Vision and Pattern Recognition (CVPR2018).

- Mete Ozay, and Takayuki Okatani: Training CNNs with Normalized Kernels, Thirty-Second AAAI Conference on Artificial Intelligence.

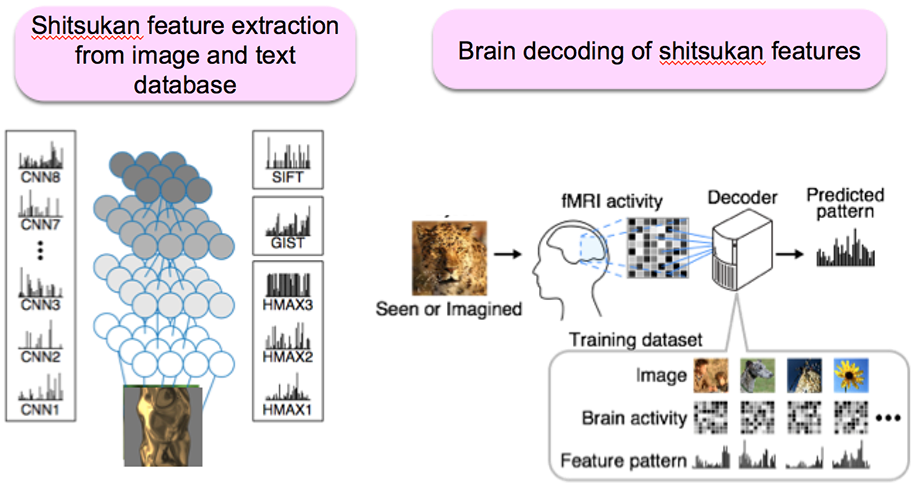

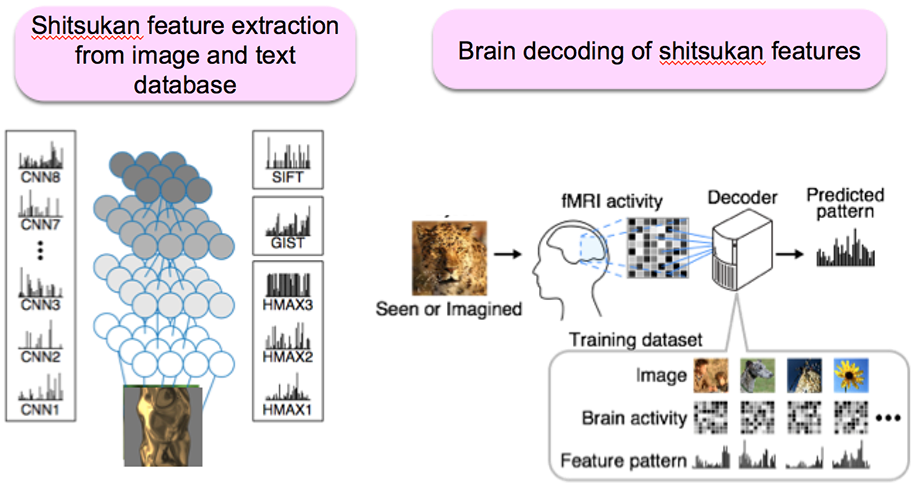

B01-2 Data-mining of shitsukan representation using brain, image, and text data

Principal Investigator : Yukiyasu Kamitani(Advanced Telecommunications Research Institute International / Kyoto University)

Principal Investigator

Advanced Telecommunications Research Institute International / Kyoto University

Yukiyasu Kamitani

Co-Investigator

Tomoyasu Horikawa (Advanced Telecommunications Research Institute International)

Kei Majima (Kyoto University)

Tatsuya Harada (The University of Tokyo)

Outline

Shitsukan may have a complex information structure related to both physical and conceptual attributes. The conventional approach that focuses on a small number of physical attributes may fail to fully characterize shitsukan information. In this project, we propose a discovery-oriented approach to shitsukan that combines brain decoding and feature extraction from image and text databases.

Achievements

- Sasaki KS, Kimura R, Ninomiya T, Tabuchi Y, Tanaka H, Fukui M, Asada YC, Arai T, Inagaki M, Nakazono T, Baba M, Kato D, Nishimoto S, Sanada TM, Tani T, Imamura K, Tanaka S, Ohzawa I: Supranormal orientation selectivity of visual neurons in orientation-restricted animals., Sci. Rep., Vol 5, Article 16712, (13 pages), 2015.

- Horikawa T, Kamitani Y: Hierarchical neural representations of dreamed objects revealed by brain decoding with deep neural network features, Frontiers in Computational Neuroscience, Vol.11, Article 4, 2017.

- Yanagisawa T, Fukuma R, Seymour B, Hosomi K, Kishima H, Shimizu T, Yokoi H, Hirata M, Yoshimine T, Kamitani Y, Saitoh Y: Induced sensorimotor brain plasticity controls pain in phantom limb patients, Nature Communications, Vol.7, Article 13209, 2016.

- Takemiya M, Majima K, Tsukamoto M, Kamitani Y: BrainLiner: A neuroinformatics platform for sharing time-aligned brain-behavior data, Frontiers in Neuroinformatics, Vol.10, Article 3, 2016.

- Nakahara K, Adachi K, Kawasaki K, Matsuo T, Sawahata H, Majima K, Takeda M, Suguyama S, Nakata R, Iijima A, Tanigawa H, Suzuki T, Kamitani Y, Hasegawa I: Associative-memory representations emerge as shared spatial patterns of theta activity spanning the primate temporal cortex, Nature Communications, Vol.7, No.11827, 2016.

- Horikawa T, Kamitani Y: Generic decoding of seen and imagined objects using hierarchical visual features, Nature Communications Vol.8, Article 5037, 2017.

- Abdelhack M, Kamitani Y: Sharpening of hierarchical visual feature representations of blurred images, eNeuro.

B01-3 Hierarchical Transformation of Shitsukan Information Representation in the Visual System

Principal Investigator : Izumi Ohzawa(Osaka University)

Principal Investigator

Osaka University

Izumi Ohzawa

Co-Investigator

Osaka University

Hiroshi Tamura

Co-Investigator

Osaka University

Kota Sasaki

Co-Investigator

Masato Okada (The University of Tokyo)

Kohei Motoya, Takashi Tsukada, Koki Shigekawa, Naoharu Iwai, Yunosuke Higashi (Osaka University)

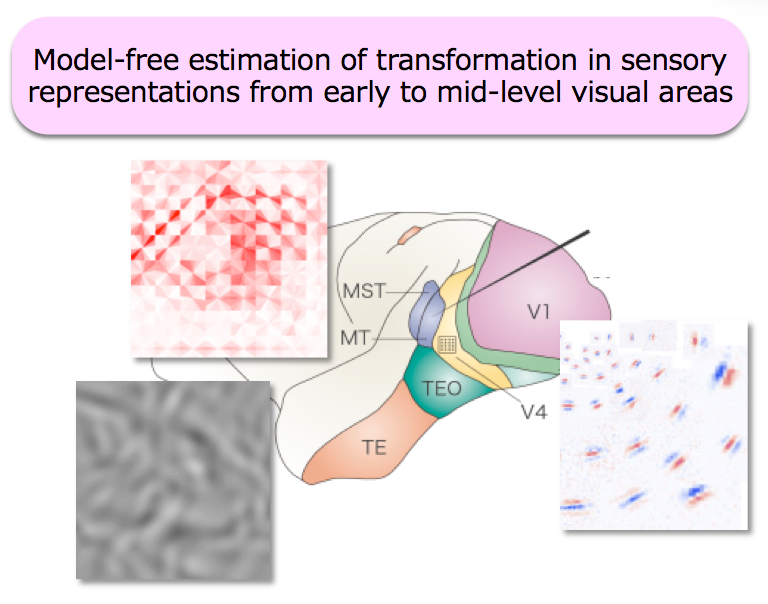

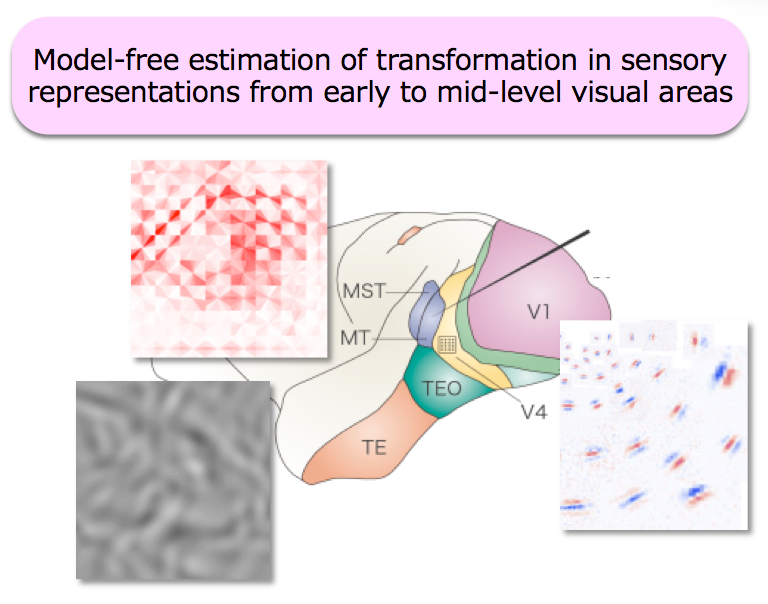

Outline

The primary objective of our research is to understand visual information processing related to "shitsukan" (perception of surface material and qualities) using data-driven approaches based on activities of single neurons in the brain. Electrophysiological and psychophysical methods are used. Since there are too many unknowns about the details of processing at intermediate stages of the visual processing pathways, traditional methods based on a small number of hypotheses and models are applicable only in a limited manner. To overcome this limitation, data-driven approaches are needed. Specifically, new analytical methods and visual stimuli will be developed in order to reduce the dependence of experiments on specific hypotheses and models. In addition to traditional stimuli, stimuli such as synthetic random stimuli based on elementary V1 signals are used. Neurons in V1 through mid-level visual areas in both the ventral and dorsal pathways will be studied.
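As a general illustration of what a data-driven analysis with random stimuli can look like, the sketch below uses spike-triggered averaging (reverse correlation), a long-established technique, on a simulated neuron. It is not a description of the project's own new methods or stimuli: the one-dimensional Gabor-like receptive field `true_rf`, the Gaussian white-noise stimuli, and the rectified-linear Poisson neuron are all assumptions chosen to keep the example small.

```python
import numpy as np

rng = np.random.default_rng(1)

n_frames, n_pixels = 20000, 16

# Hypothetical receptive field: a 1D Gabor-like profile, normalised to unit length.
x = np.linspace(-2.0, 2.0, n_pixels)
true_rf = np.exp(-x**2) * np.cos(3.0 * x)
true_rf /= np.linalg.norm(true_rf)

# Random stimuli: Gaussian white noise, one row per stimulus frame.
stimuli = rng.normal(size=(n_frames, n_pixels))

# Simulated neuron: linear filter, half-wave rectification, Poisson spike counts.
drive = stimuli @ true_rf
rate = np.maximum(drive, 0.0)
spikes = rng.poisson(rate)

# Spike-triggered average: mean stimulus weighted by the spike count it evoked.
sta = (spikes @ stimuli) / spikes.sum()

# Compare the direction of the estimate with the simulated ground truth.
sta_unit = sta / np.linalg.norm(sta)
match = float(sta_unit @ true_rf)  # cosine similarity between estimate and truth
```

The estimate is obtained without specifying the shape of the receptive field in advance, which is the sense in which such analyses reduce dependence on specific hypotheses; for neurons beyond V1, where a single linear filter is a poor description, more elaborate variants would be needed.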

Achievements

- Baba M, Sasaki KS, Ohzawa I: Integration of multiple spatial frequency channels in disparity-sensitive neurons in the primary visual cortex., J Neurosci., 5(27):10025-10038, 2015.

- Hasuike M, Ueno S, Minowa D, Yamane Y, Tamura H, Sakai K: Figure-Ground Segregation by a Population of V4 cells --- A Computational Analysis on Distributed Representation, 22nd International Conference on Neural Information Processing (ICONIP) 2015. Lecture Notes in Computer Science, Vol. 9490, pp.617-622, Istanbul, Turkey, 2015.

- Inagaki M, Sasaki KS, Hasimoto H, Ohzawa I: Subspace mapping of the three-dimensional spectral receptive field of macaque MT neurons., J Neurophysiol., 112 (2): 784-795, 2016.

- Kaneko H, Sano H, Hasegawa Y, Tamura H, Suzuki SS: Effects of forced movements on learning: findings from a choice reaction-time task in rats, Learning & Behavior, 10.3758/s13420-016-0255-9, 2017.

- Tamura H, Otsuka H, Yamane Y: Neurons in the inferior temporal cortex of macaque monkeys are sensitive to multiple surface features from natural objects, bioRxiv, 86157, 2016.

- Mochizuki Y, Onaga T, Shimazaki H, Shimokawa T, Tsubo Y, Kimura R, Saiki A, Sakai Y, Isomura Y, Fujisawa S, Shibata K, Hirai D, Furuta T, Kaneko T, Takahashi S, Nakazono T, Ishino S, Sakurai Y, Kitsukawa T, Lee JW, Lee H, Jung M, Babul C, Maldonado P, Takahashi K, Arce-McShane F, Ross C, Sessle B, Hatsopoulos N, Brochier T, Riehle A, Chorley P, Gruen S, Nishijo H, Ichihara-Takeda S, Funahashi S, Shima K, Mushiake H, Yamane Y, Tamura H, Fujita I, Inaba N, Kawano K, Kurkin S, Fukushima K, Kurata K, Taira M, Tsuitsui K, Ogawa T, Komatsu H, Koida K, Toyama K, Richmond B, Shinomoto S: Similarity in neuronal firing regimes across mammalian species, J Neurosci, 36(21):5736-5747, 2016.

- Kato D, Baba M, Sasaki KS, Ohzawa I: Effects of generalized pooling on binocular disparity selectivity of neurons in the early visual cortex, Phil. Trans. R. Soc. B, Vol 371, Article 20150266, (19 pages), 2016.

- Ito J, Yamane Y, Suzuki M, Maldonado P, Fujita I, Tamura H, Sonja Gruen: Switch from ambient to focal processing mode explains the dynamics of free viewing eye movements, Scientific Reports, Vol 7, Article: 1082, 2017.

B01-4 Shitsukan Information Based on Correspondences of Physics, Perception, and Affective Evaluations

Principal Investigator : Maki Sakamoto(University of Electro-Communications)

Principal Investigator

University of Electro-Communications

Maki Sakamoto

Co-Investigator

Toyohashi University of Technology

Shigeki Nakauchi

Co-Investigator

Junji Watanabe (NTT Communication Science Labs, Nippon Telegraph and Telephone Corp)

Ryo Watanabe, Yuji Nozaki, Tomohiko Inazumi, Naoharu Iwai, Yunosuke Higashi (University of Electro-Communications)

Kohei Motoya, Takashi Tsukada, Koki Shigekawa, Naoharu Iwai, Yunosuke Higashi (Toyohashi University of Technology)

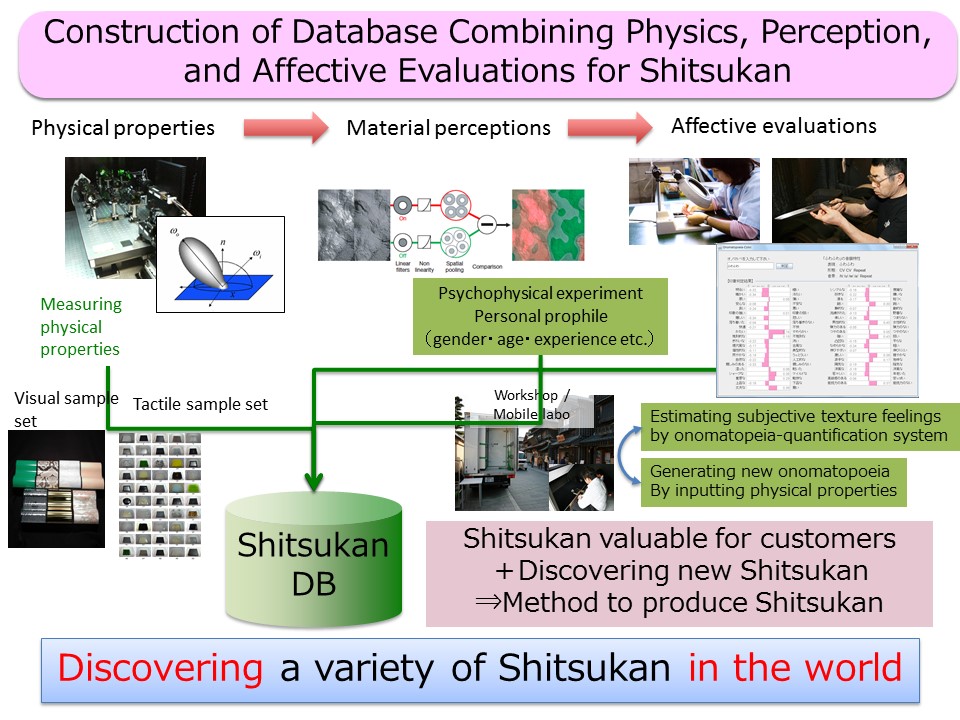

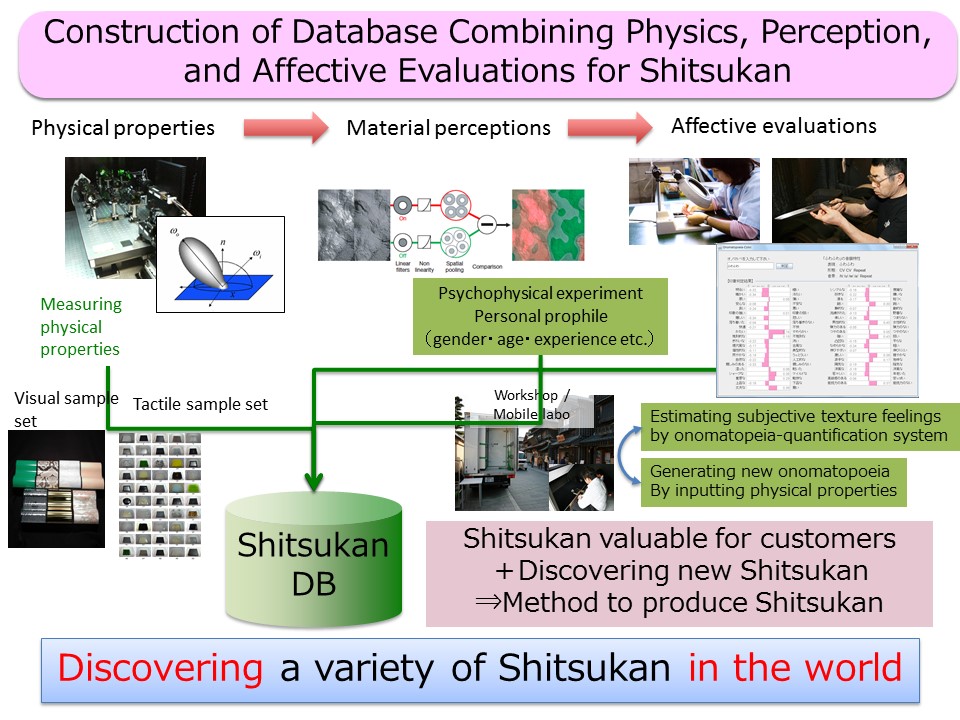

Outline

We construct a Shitsukan database by exploring physical properties, material perceptions obtained through psychophysical experiments, and affective evaluations described in language. The database can serve as a dataset for machine learning to build a Shitsukan cognitive model or as a dataset for Shitsukan engineering. We also attempt to clarify the cognitive mechanism by which we perceive Shitsukan from materials and categorize it into onomatopoeia or linguistic sounds, by analysing the relationships among physical properties, material perceptions, and subjective linguistic expressions of Shitsukan. Furthermore, we construct a system that converts physical properties to onomatopoeia and vice versa. Finally, we aim to contribute to the development of new materials by generating onomatopoeia appropriate for given physical or perceptual properties, or by discovering new Shitsukan through onomatopoeia.

Achievements

- Sakamoto M, Watanabe J: Cross-Modal Associations between Sounds and Drink Tastes/Textures: A Study with Spontaneous Production of Sound-Symbolic Words, Chemical Senses, Vol. 41, No. 3, pp. 197-203, 2015.

- Tamura H, Nakauchi S, Koida K: Robust brightness enhancement across a luminance range of the glare illusion, Journal of Vision, 16(1):1-13, 2016.

- Kusaba Y, Doizaki R, Sakamoto M: A Study on Effectiveness of Onomatopoeia in Expressing Users' Operational Feelings about HMIs, International Journal of Affective Engineering, 15(2):177-182, 2016.

- Mai Kosahara, Junji Watanabe, Yasuaki Hiranuma, Ryuichi Doizaki, Takahide Matsuda, Maki Sakamoto: A System to Visualize Tactile Perceptual Space of Young and Old People, AAAI 2016 Spring Symposium Series, 2016.

- Minh Vu, B., Urban, P., Tanksale, T. M., Nakauchi, S.: Visual perception of 3D printed translucent objects, Color Imaging Conference 24 (CIC24), 2016.

- Tamura, H., Higashi, H., Nakauchi, S.: Kinetic cue for perceptual discrimination between mirror and glass materials, The European Conference on Visual Perception (ECVP2016), 2016.

- Tsukuda, M., Bednarik, R., Hauta-Kasari, M., Nakauchi, S.: Static cues for mirror-glass discrimination explored by gaze distribution, The European Conference on Visual Perception (ECVP2016), 2016.

- Doizaki R, Watanabe J, Sakamoto M: Automatic Estimation of Multidimensional Ratings from a Single Sound-symbolic Word and Word-based Visualization of Tactile Perceptual Space, IEEE Transactions on Haptics, 99, 2016.

- Kwon J, Sakamoto M: Visualization of relation between sound symbolic word and perceptual characteristics of environmental sounds, American Institute of Physics (AIP) Conference Proceedings, 2016.

- Maki Sakamoto, Junji Watanabe: Exploring Tactile Perceptual Dimensions Using Materials Associated with Sensory Vocabulary, Frontiers in Psychology, 8:1-10, 2017.

- Hiranuma Y, Doizaki R, Shimotai K, Sato H, Iwamoto M, Okano D, Toriyabe S, Sakamoto M: Multiobjective Optimization of Outdoor Advertisements Focusing on Impression, Attention, and Memory, International Journal of Affective Engineering, 16(2), 2017.

- Maki Sakamoto, Junji Watanabe: Bouba/Kiki in Touch: Associations Between Tactile Perceptual Qualities and Japanese Phonemes, Frontiers in Psychology, 9(295), 2018.

C01 Shitsukan Innovation

C01-1 Development of Record and Replay Technology for Haptic Feeling

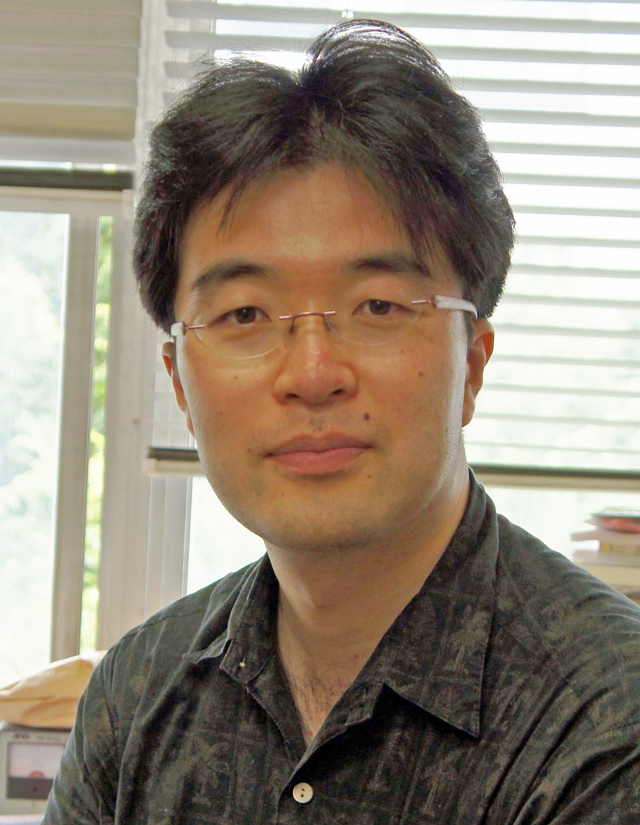

Principal Investigator : Hiroyuki Kajimoto(The University of Electro-Communications)

Principal Investigator

The University of Electro-Communications

Hiroyuki Kajimoto

Co-Investigator

Nagoya University

Shogo Okamoto

Co-Investigator

Satoshi Saga (Kumamoto University)

Yoshimune Nonomura (Yamagata University)

Outline

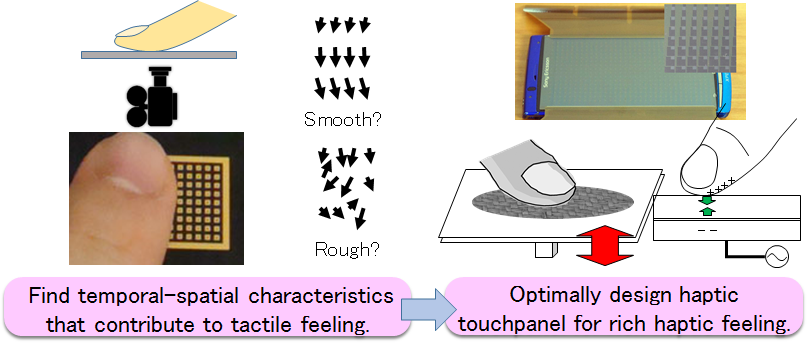

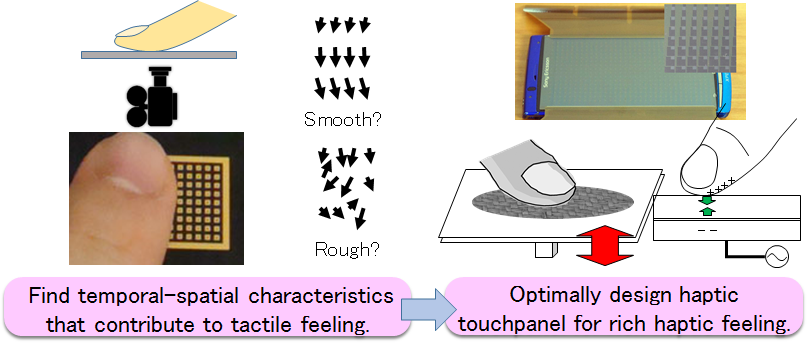

Haptic feeling is an important factor in the design of commercial products, and it has recently attracted attention in the field of mobile and tangible electronic devices. However, current haptic technologies can present only limited haptic feeling, mainly because the hardware achieves limited temporal and spatial resolution and because little is known about the temporal-spatial cross-interactions that contribute to haptic feeling.

The purposes of this research project are twofold. First, the temporal-spatial characteristics that contribute to tactile feeling are determined through observation-based study and validated by mechanical stimulation. Second, a touch-panel-based haptic display is developed that optimally combines several stimulation methods to present rich haptic feeling.

Achievements

- Yohei Fujii, Shogo Okamoto, and Yoji Yamada: Friction model of fingertip sliding over wavy surface for friction-variable tactile feedback panel, Advanced Robotics, 30(20):1341-1353, 2016.

- Takumu Okada, Shogo Okamoto, and Yoji Yamada: Impulsive resistance force generated using pulsive damping brake of DC motor, IEEE International Conference on Systems, Man, and Cybernetics, 2016.

- Takahiro Shitara, Yuriko Nakai, Haruya Uematsu, Vibol Yem, Hiroyuki Kajimoto, Satoshi Saga: Reconsideration of Ouija Board Motion in Terms of Haptics Illusions, EuroHaptics 2016.

- Seitaro Kaneko, Hiroyuki Kajimoto: Method of Observing Finger Skin Displacement on a Textured Surface Using Index Matching, EuroHaptics 2016.

- Kosuke Higashi, Shogo Okamoto, and Yoji Yamada: What is the Hardness Perceived by Tapping?, EuroHaptics 2016.

- Kensuke Kidoma, Shogo Okamoto, Hikaru Nagano, and Yoji Yamada: Graphical modeling method of texture-related affective and perceptual responses, International Journal of Affective Engineering, 16(1):27-36, 2016.

- Hatem Elfekey, Hany Ayad Bastawrous, and Shogo Okamoto: A touch sensing technique using the effects of extremely low frequency fields on the human body, Sensors, 16(12):2049, 2016.

- Kosuke Higashi, Shogo Okamoto, and Yoji Yamada: Effects of mechanical parameters on hardness experienced by damped natural vibration stimulation, IEEE International Conference on Systems, Man, and Cybernetics, 2016.

- Shunsuke Sato, Shogo Okamoto, and Yoji Yamada: Wearable finger pad sensor for tactile textures using propagated deformation at finger side: Assessment of accuracy, IEEE International Conference on Systems, Man, and Cybernetics, 2016.

- Kosuke Higashi, Shogo Okamoto, Yoji Yamada, Hikaru Nagano, Masashi Konyo: Hardness perception by tapping: Effect of dynamic stiffness of objects, IEEE WorldHaptics 2017.

- Shitara T, Nakai Y, Uematsu H, Yem V, Kajimoto H: Reconsideration of Ouija Board Motion in Terms of Haptics Illusions (II) -Experiment with 1-DoF Linear Rail Device-, IEEE WorldHaptics 2017.

- Kameoka T, Takahashi A, Yem V, Kajimoto H: Quantification of Stickiness Using a Pressure Distribution Sensor, IEEE WorldHaptics 2017.

- Shunsuke Sato, Shogo Okamoto, Yoichiro Matsuura, and Yoji Yamada: Wearable finger pad deformation sensor for tactile textures in frequency domain by using accelerometer on finger side, Robomech Journal, 4, 19, 2017.

- Takumu Okada, Shogo Okamoto, and Yoji Yamada: Discriminability of virtual roughness presented by a passive haptic interface, Transactions on Human Interface Society, 20(2).

C01-2 Modeling and Rendering of Appearances of Complex Objects by Computer Graphics Techniques

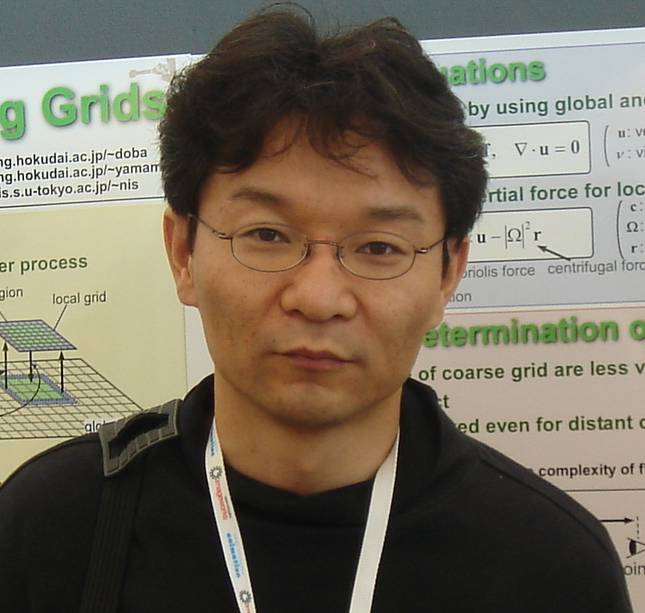

Principal Investigator : Yoshinori Dobashi(Hokkaido University)

Principal Investigator

Hokkaido University

Yoshinori Dobashi

Co-Investigator

Wakayama University

Kei Iwasaki

Co-Investigator

Shizuoka University

Makoto Okabe

Co-Investigator

Shibaura Institute of Technology

Takashi Ijiri

Co-Investigator

Chuo Gakuin University

Hideki Todo

Co-Investigator

Tsuyoshi Yamamoto (Hokkaido University)

Yoko Mizokami (Chiba University)

Outline

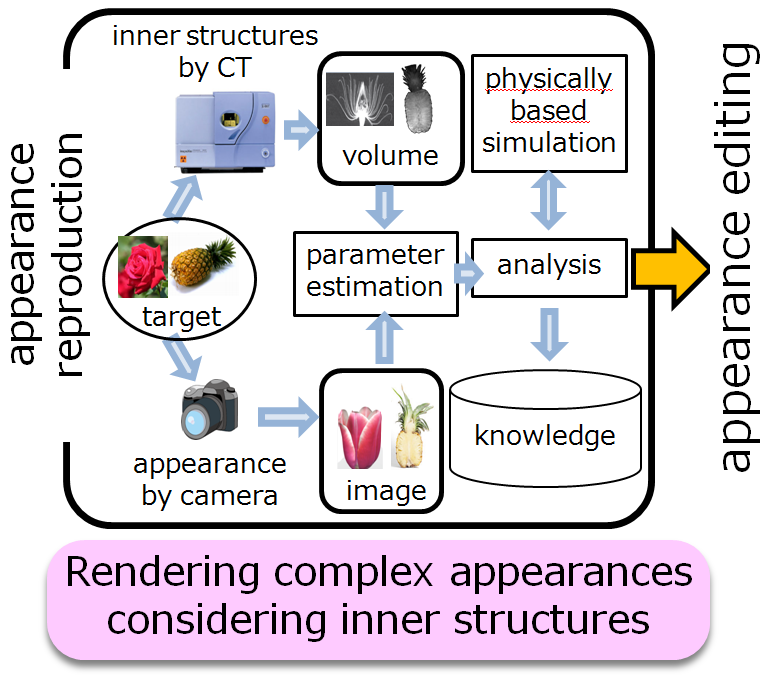

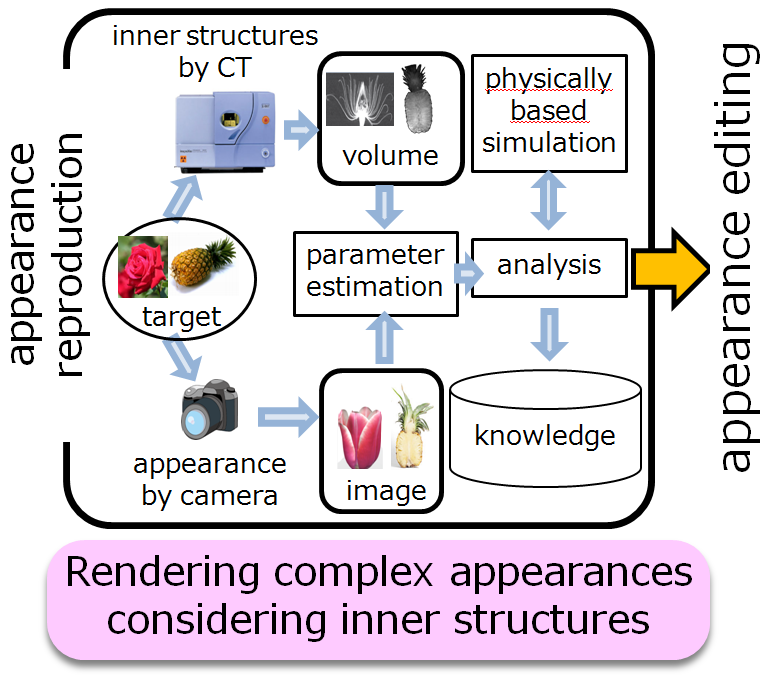

The goal of this project is to clarify the mechanism of material recognition and to find its engineering applications. We accelerate research on material recognition by using computer graphics techniques to virtually measure important factors that cannot be measured in the real world. Based on the recognition mechanism obtained by these scientific methods, we then develop novel appearance reproduction methods that are useful for practical applications. Unlike previous methods for capturing and rendering object appearances, our approach treats a target object as a fully volumetric object by measuring its inner structures using computed tomography. With this approach, we aim to find factors important for appearance reproduction that cannot be captured by previous approaches, which model surface structures only. Furthermore, because we acquire volumetric structures, we extend our research to investigating the mechanism of material perception through sound and motion.

Achievements

- Yoshida H, Nabata K, Iwasaki K, Dobashi Y, Nishita T: Adaptive Importance Caching for Many-light Rendering, Journal of WSCG, 23(1):65-72, 2015.

- Nabata K, Iwasaki K, Dobashi Y, Nishita T: An Error Estimation Framework for Many-Light Rendering, Computer Graphics Forum, 35(7).

- Mikio Shinya, Yoshinori Dobashi, Michio Shiraishi, Motonobu Kawashima, Tomoyuki Nishita: Multiple Scattering Approximation in Heterogeneous Media, Computer Graphics Forum 35(7).

- Hideki T, Yasushi Y: Reflectance and Shape Estimation for Cartoon Shaded Objects, Pacific Graphics 2016 Short Paper.

- Hidetomo Kataoka, Takashi Ijiri, Jeremy White and Akira Hirabayashi: Acoustic probing to estimate freshness of tomato, Asia-Pacific Signal and Information Processing Association (APSIPA), 2016.

- Yoshinori Dobashi, Takashi Ijiri, Hideki Todo, Kei Iwasaki, Makoto Okabe, Satoshi Nishimura: Measuring Microstructures Using Confocal Laser Scanning Microscopy for Estimating Surface Roughness, In Proceedings of ACM SIGGRAPH 2016 Posters (SIGGRAPH '16), Article No. 28, Anaheim Convention Center, 2016.

- Takamichi Kojima, Takashi Ijiri, Hidetomo Kataoka, Jeremy White, Akira Hirabayashi: CogKnife: Food Recognition From Their Cutting Sounds, 8th Workshop on Multimedia for Cooking and Eating Activities (a symposium held within ICME 2016), Seattle WA, 2016.

- Makoto Okabe, Yoshinori Dobashi, Ken Anjyo: Animating pictures of water scenes using video retrieval, The Visual Computer, 2016.

- Hideki T, Yasushi Y: Estimating reflectance and shape of objects from a single cartoon-shaded image, Computational Visual Media, 3(1), 2017.

- Muhammad A, Hideki T, Koji M, Kunio K: SPLIGHT: Lighting for Splat Based Rendering Towards Temporal Coherence, IEVC 2017.

- Yoshinori Dobashi, Takashi Ijiri, Hideki Todo: Estimating Surface Roughness for Realistic Rendering of Fruits, IEVC 2017.

- Syuhei Sato, Keisuke Mizutani, Yoshinori Dobashi, Tomoyuki Nishita, Tsuyoshi Yamamoto: Feedback Control of Fire Simulation based on Computational Fluid Dynamics, Computer Animation and Virtual Worlds Journal, 2017.

- Y. Dobashi, K. Iwasaki, M. Okabe, T. Ijiri, H. Todo: Inverse appearance modeling of interwoven cloth, The Visual Computer.

- Takashi Ijiri, Hideki Todo, Akira Hirabayashi, Kenji Kohiyama, Yoshinori Dobashi: Digitization of natural objects with micro CT and photographs, PLoS ONE, 3(4).

- J. Tsuruga, K. Iwasaki: Sawtooth Cycle Revisited, Computer Animation and Virtual Worlds.

C01-3 Reproducing and Editing Material Properties on Real Object Surfaces by Programmable Illumination

Principal Investigator : Daisuke Iwai(Osaka University)

Principal Investigator

Osaka University

Daisuke Iwai

Co-Investigator

Hiroshima City University

Shinsaku Hiura

Co-Investigator

Daisuke Miyazaki, Yu Kawamoto (Hiroshima City University)

Makiko Yonehara (Kindai University)

Yuta Ito (Tokyo Institute of Technology)

Haruka Matsukura, Parinya Punpongsanon, Takafumi Hiraki (Osaka University)

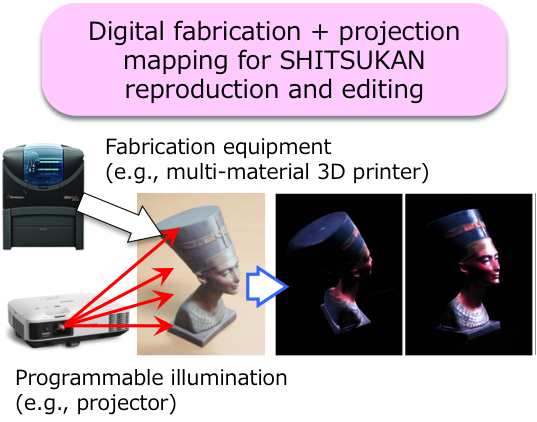

Outline

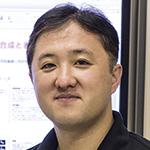

To understand human recognition of material properties, we aim to realize a display that can computationally control and render various material appearances defined by complex, multidimensional reflectance properties, or BTF (Bidirectional Texture Function). In this project, we develop a technology that can reproduce a desired BTF on a real object surface and edit it with high flexibility. To achieve this goal, we develop the following two technologies. First, we develop a calibration method for digital fabrication (D-Fab) equipment, such as a milling machine and a multi-material 3D printer, by applying computational photography techniques with programmable illumination. Using calibrated D-Fab equipment, we can output a 3D object with a BTF that is close to the desired one. Second, we develop a spatially and angularly varying light projection system with which we edit the reflected light pattern from the surface of a D-Fab object. We apply computational display techniques to manipulate the light field in the space between the object and observers. Finally, we combine these two techniques to reproduce and edit the material and surface qualities of real objects.

Achievements

- Grundhöfer A, Iwai D: Robust, Error-Tolerant Photometric Projector Compensation, IEEE Transactions on Image Processing, 24(12):5086-5099, 2015.

- Punpongsanon P, Iwai D, Sato K: SoftAR: Visually Manipulating Haptic Softness Perception in Spatial Augmented Reality, IEEE Transactions on Visualization and Computer Graphics, 21(11):1279-1288, 2015.

- Tsukamoto J, Iwai D, Kashima K: Radiometric Compensation for Cooperative Distributed Multi-Projection System through 2-DOF Distributed Control, IEEE Transactions on Visualization and Computer Graphics, 21(11):1221-1229, 2015.

- Asayama H, Iwai D, Sato K: Diminishable Visual Markers on Fabricated Projection Object for Dynamic Spatial Augmented Reality, In Proceedings of ACM SIGGRAPH ASIA Emerging Technologies, 2015.

- Miyazaki D, Nakamura M, Baba M, Furukawa R, Hiura S: Optimization of Illumination for Generating Metamerism, Journal of Imaging Science and Technology, 60(6): 60502:1-15, 2016.

- Miyazaki D, Shigetomi T, Baba M, Furukawa R, Hiura S, Asada N: Surface normal estimation of black specular objects from multiview polarization images, SPIE Optical Engineering, 56(4): 041303:1-17, 2016.

- Aoyama S, Iwai D, Sato K: Altering resistive force perception by modulating velocity of dot pattern projected onto hand, ACM Workshop on Multimodal Virtual and Augmented Reality, 2016.

- Takeda S, Iwai D, Sato K: Inter-reflection Compensation of Immersive Projection Display by Spatio-Temporal Screen Reflectance Modulation, IEEE Transactions on Visualization and Computer Graphics, 22(4):1424-1431, 2016.

- Tsukamoto J, Iwai D, Kashima K: Distributed Optimization Framework for Shadow Removal in Multi-Projection Systems, Computer Graphics Forum, 36(8): 369-379, 2017.

- Kitajima Y, Iwai D, Sato K: Simultaneous Projection and Positioning of Laser Projector Pixels, IEEE Transactions on Visualization and Computer Graphics, 23(11): 2419-2429, 2017.

- Bermano A, Billeter M, Iwai D, Grundhoefer A: Makeup Lamps: Live Augmentation of Human Faces via Projection, Computer Graphics Forum, 36(2): 311-323, 2017.

- Hamasaki T, Itoh Y, Hiroi Y, Iwai D, Sugimoto M: HySAR: Hybrid Material Rendering by an Optical See-Through Head-Mounted Display with Spatial Augmented Reality Projection, IEEE Transactions on Visualization and Computer Graphics, 24(4): 1457-1466, 2018.

- Asayama H, Iwai D, Sato K: Fabricating Diminishable Visual Markers for Geometric Registration in Projection Mapping, IEEE Transactions on Visualization and Computer Graphics, 24(2): 1091-1102, 2018.

C01-4 Technologies for Analyzing, Controlling and Managing Information on Multimodal Material Perception in the Real World

Principal Investigator : Katsunori Okajima(Yokohama National University)

Principal Investigator

Yokohama National University

Katsunori Okajima

Co-Investigator

Chiba University

Takahiko Horiuchi

Co-Investigator

Chiba University

Shoji Tominaga

Co-Investigator

Norihiro Tanaka (Nagano University)

Katsuyoshi Hoshino, Keta Hirai, Midori Tanaka (Chiba University)

Shogo Nishi (Osaka Electro-Communication University)

Masahito Nagata, Masahiko Yamakawa (Yokohama National University)

Seichi Tsujimura (Nagoya City University)

Shino Okuda (Doshisha Women's College of Liberal Arts)

Toki Li, Shuhei Watanabe, Wei Li (Doshisha Women's College of Liberal Arts)

Outline

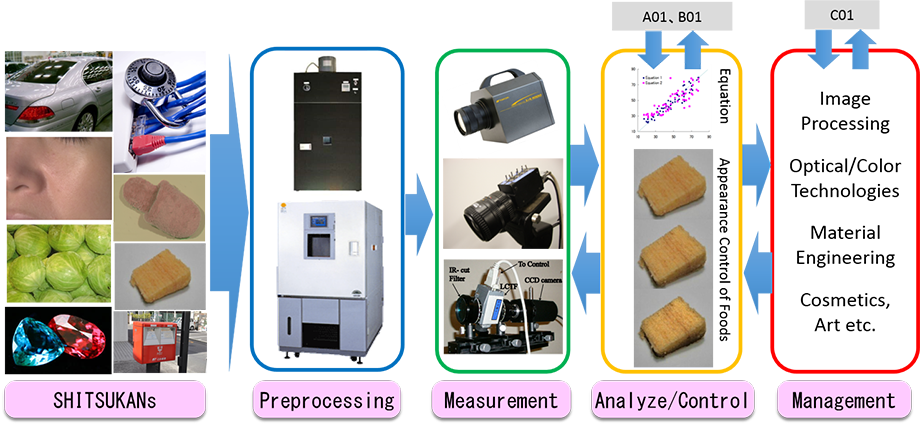

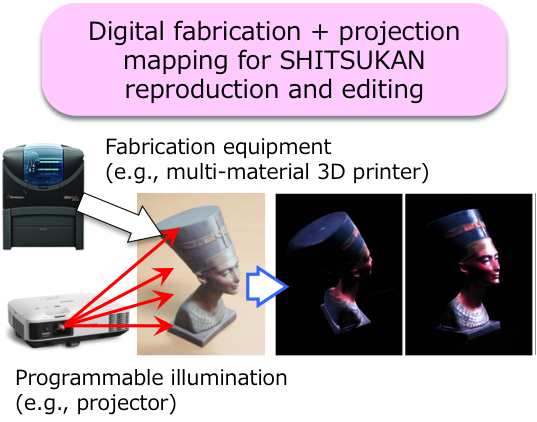

We aim to establish "SHITSUKAN (Material Perception) engineering" as an academic system of SHITSUKAN management that is useful at industrial manufacturing sites and is based on a scientific understanding of material perception. "SHITSUKAN engineering" makes it possible to control SHITSUKAN directly by manipulating the physical parameters of stimuli, based on an understanding of information processing in the brain. To this end, we will merge research results from the present research area and apply that knowledge to solve various challenges in the real world. In addition, we will model and formulate SHITSUKAN mechanisms by systematically analyzing SHITSUKAN information from many directions. Finally, we will establish a comprehensive engineering system by which optical SHITSUKAN can be controlled and managed even in the wavelength dimension. From 2017, we will try to develop automatic measurement, analysis, and management systems for optical SHITSUKAN information, and to structure multimodal SHITSUKAN engineering by considering the five senses in cooperation with publicly-offered projects.

Achievements

- Shino Okuda, Katsunori Okajima, Carlos Arce-Lopera: Visual Palatability of Food Dishes in Color Appearance, Glossiness and Convexo-concave Perception Depending on Light Source, Journal of Light & Visual Environment, 39, 8-14, 2016.

- Hirai K, Irie D, Horiuchi T: Multi-primary Image Projector using Programmable Spectral Light Source, Journal of the Society for Information Display, 24(3):144-153, 2016.

- Sanae Yoshimoto, Katsunori Okajima, Tatsuto Takeuchi: Motion Perception under Mesopic Vision, Journal of Vision, 16(1):16, 1-15, 2016.

- Tominaga S, Kato K, Hirai K, Horiuchi T: Bispectral Interreflection Estimation of Fluorescent Objects, In Proceedings of Color and Imaging Conference, 2015.

- Saito Y, Hirai K, Horiuchi T: Construction of Manga Materials Database for Analyzing Perception of Materials in Line Drawings, In Proceedings of Color and Imaging Conference, 2015.

- Hirai K., Nakahata R., Horiuchi T: Measuring Spectral Reflectance and 3D Shape using Multi-primary Image Projector, In Proceedings of Multispectral Colour Science, 2016.

- Sole A., Farup I., Tominaga S: Image Based Reflectance Measurement Based on Camera Spectral Sensitivities, In Proceedings of IS&T Electronic Imaging, 2016.

- Tominaga S, Dozaki S, Kuma Y, Hirai K: Modeling and Estimation for Surface-Spectral Reflectance of Watercolor Paintings, In Proceedings of IS&T Electronic Imaging, 2016.

- Tanaka M, Horiuchi T.: Appearance harmony of materials using real objects and displayed images, Journal of the International Colour Association, 15: 3-18, 2016.

- Tominaga S, Kato K, Hirai K, Horiuchi T.: Spectral image analysis of mutual illumination between florescent objects, The Journal of the Optical Society of America A, 33(8): 1476-1487, 2016.

- Spence C, Okajima K, Cheok AD, Petit O, Michel C: Eating with our eyes: From visual hunger to digital satiation, Brain and Cognition, 110, 53-63, 2016.

- Imura T, Masuda T, Wada Y, Tomonaga M, Okajima K: Chimpanzees can visually perceive differences in the freshness of foods, Scientific Reports, 6, 34685, 2016.

- Shino Okuda, Katsunori Okajima: Effects of Spectral Component of Light on Appearance of Skin of Woman's Face with Make-up, Journal of Light & Visual Environment, 40, 20-27, 2017.

- Nishizawa M, Wanting J, Okajima K: Projective-AR System for Customizing the Appearance and Taste of Food Interaction, In Proceedings of 18th International Conference on Multimodal Interaction, MVAR2016-Article#6, 2016.

- S.Yamaguchi, K.Hirai and T.Horiuchi: Video Smoke Removal based on Smoke Imaging Model and Space-Time Pixel Compensation, In Proceedings of Computational Color Imaging Workshop, 2017.

- T.Kiyotomo, K.Hoshino, Y.Tsukano, H.Kibushi, T.Horiuchi: Edge-Preserving Error Diffusion for Multi-Toning Based on Dual Quantization, In Proceedings of IS&T Electronic Imaging, 2017.

- K.Hirai, W.Suzuki, Y.Yamada and T.Horiuchi: Interactive Object Surface Retexturing using Perceptual Quality Indexes, In Proceedings of IS&T Electronic Imaging, 2017.

- S.Tominaga, K.Kato, K.Hirai and T.Horiuchi: Appearance Decomposition and Reconstruction of Textured Fluorescent Objects, In Proceedings of IS&T Electronic Imaging, 2017.

- Okuda S, Okajima K: Color Design of Mug with Green Tea for Visual Palatability, International Association of Societies of Design Research 2015, 2016.

- Horiuchi T., Zheng Q., Hirai K.: Memory Effects in gold material perception, In Proceedings of CIE Expert Symposium on Colour and Visual Appearance, in press.

- Tanaka M., Horiuchi T.: Physical index for judging appearance harmony of materials, In Proceedings of CIE Expert Symposium on Colour and Visual Appearance, in press.

- Tominaga S., Kato K., Hirai K., Horiuchi T.: Spectral Image Analysis and Appearance Reconstruction of Fluorescent Objects under Different Illuminations, In Proceedings of CIE Expert Symposium on Colour and Visual Appearance, in press.

- Tanaka M., Horiuchi T.: Perception of Gold Materials by Projecting Solid Colour on Black Materials, In Proceedings of AIC2014 Interim Meeting, in press.

- Tominaga S., Kato K., Hirai K., Horiuchi T.: Spectral Image Analysis of Florescent Objects with Mutual Illumination, In Proceedings of Color and Imaging Conference, in press.

- Katsunori Okajima, Miki Yonezawa: Effects of Color Distribution on the Impression of Facial Skin, European Conference on Visual Perception 2016 (ECVP2016), 2016.

- Masahiko Yamakawa, Sei-ichi Tsujimura, Katsunori Okajima: Contribution of ipRGC to the brightness perception, Asia Pacific Conference on Vision 2016 (APCV2016), 2016.

- Katsunori Okajima, Jiang Wanting, Masahiro Nishizawa: Control of crossmodal effects of food appearance using a projective-AR system, Sensometrics 2016, 2016.

- Katsunuma T, Hirai K, Horiuchi T.: Perceptual Dependencies between Texture and Color in Fabric Appearance, In Proceedings of IS&T Electronic Imaging, 2016.

- Tanaka M, Horiuchi T.: Physical Indices for Judging Appearance Harmony of Materials, Color Research and Application, 42(6), 788-798, 2017.

- Doi M, Kimachi A, Nishi S, Tominaga S: Estimation of Local Skin Properties from Spectral Images, Journal of Imaging Science and Technology, in press.

- Katsunuma T, Hirai K, Horiuchi T: Fabric Appearance Control System for Example-based Interactive Texture and Color Design, ACM Transactions on Applied Perception, 14(3), 2017.

- Tanaka M., Horiuchi T., Otani K., Hung P.: Evaluation for faithful reproduction of star fields in a planetarium, Journal of Imaging Science and Technology, 61(6): 060401-1-060401-12, 2017.

- Martínez-Domingo M.A., Valero E.M., Hernández-Andrés J., Tominaga S., Horiuchi T., Hirai K.: Image Processing Pipeline for Segmentation and Material Classification based on Multispectral High Dynamic Range Polarimetric Images, Optics Express, 25(24): 30073-30090, 2017.

- S. Nishi, A. Kimachi, M. Doi, S. Tominaga: Image Registration for a Multispectral Imaging System Using Interference Filters and Application to Digital Archiving of Art Paintings, Color Culture and Science, 7: 59-68, 2017.

- Tanaka M, Arai R., Horiuchi T.: PuRet: Material Appearance Enhancement Considering Pupil and Retina Behaviors, Journal of Imaging Science and Technology, 61(4): 040401-1-040401-8, 2017.

- Horiuchi T., Saito Y., Hirai K.: Analysis of Material Representation of Manga Line Drawings using Convolutional Neural Networks, Journal of Imaging Science and Technology, 61(4): 040404-1-040404-10, 2017.

- M. Melgosa, N. Richard, C. Fernández-Maloigne, K. Xiao, H. de Clermont-Gallerande, S. Jost-Boissard, K. Okajima: Colour differences in Caucasian and Oriental women's faces illuminated by white LED sources, International Journal of Cosmetic Science.

- Hirai K., Osawa N., Hori M., Horiuchi T., Tominaga S.: High-Dynamic-Range Spectral Imaging System for Omnidirectional Scene Capture, Journal of Imaging, 4(53): 1-20, 2018.

- Tominaga S., Hirai K., Horiuchi T.: Estimation of fluorescent Donaldson matrices using a spectral imaging system, Optics Express, 26(2): 2132-2148, 2018.